Hardware

Power Protection

| Device | Model | Protected Equipment | Capacity |

|---|---|---|---|

| UPS #1 | CyberPower | Dell R720 | 1500VA |

| UPS #2 | CyberPower | Mini PCs + Network | 1000VA |

Network Stack

| Device | Model | Specs | Purpose |

|---|---|---|---|

| ONT | Huawei | 1GbE | ISP Gateway |

| Firewall | XCY X44 | 8× 1GbE | pfSense Router |

| WiFi | TP-Link AX3000 | WiFi 6 | Wireless AP |

| Switch | TP-Link | 24-port | Core Switch |

Compute

Note

On-Premise Hardware

| Device | CPU | RAM | Storage | Purpose |

|---|---|---|---|---|

| Beelink GTi 13 | i9-13900H (14C/20T) | 64GB DDR5 | 2× 2TB NVMe | Proxmox |

| OptiPlex #1 | i5-6500T (4C/4T) | 16GB DDR4 | 128GB NVMe / 2TB | Proxmox |

| OptiPlex #2 | i5-6500T (4C/4T) | 16GB DDR4 | 128GB NVMe / 2TB | Proxmox |

| Dell R720 | 2× E5-2697v2 (24C/48T) | 192GB ECC | 4× 960GB SSD | Backup Server |

| Synology DS223+ | ARM RTD1619B | 2GB | 2× 2TB RAID1 | NAS/Media |

Tip

Cloud Infrastructure

| Provider | Instance | Specs | Location | Purpose |

|---|---|---|---|---|

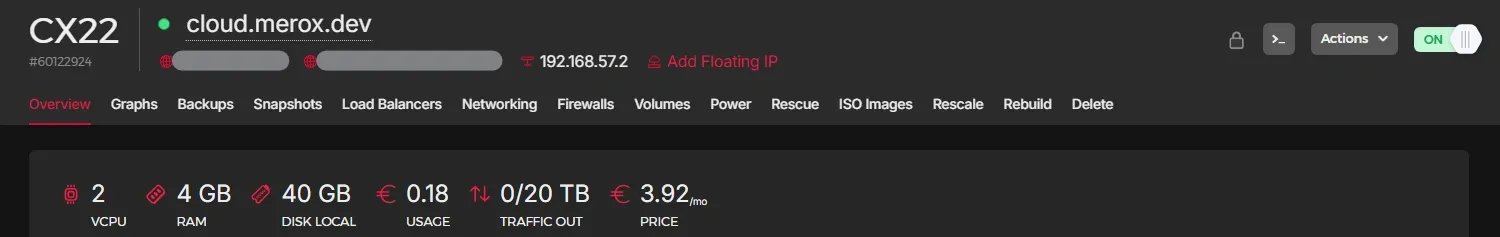

| Hetzner | CX22 | 4vCPU/8GB/80GB | Germany | Off-site Backup |

| Oracle | Ampere A1 | 4vCPU/24GB/200GB | USA | Docker Test |

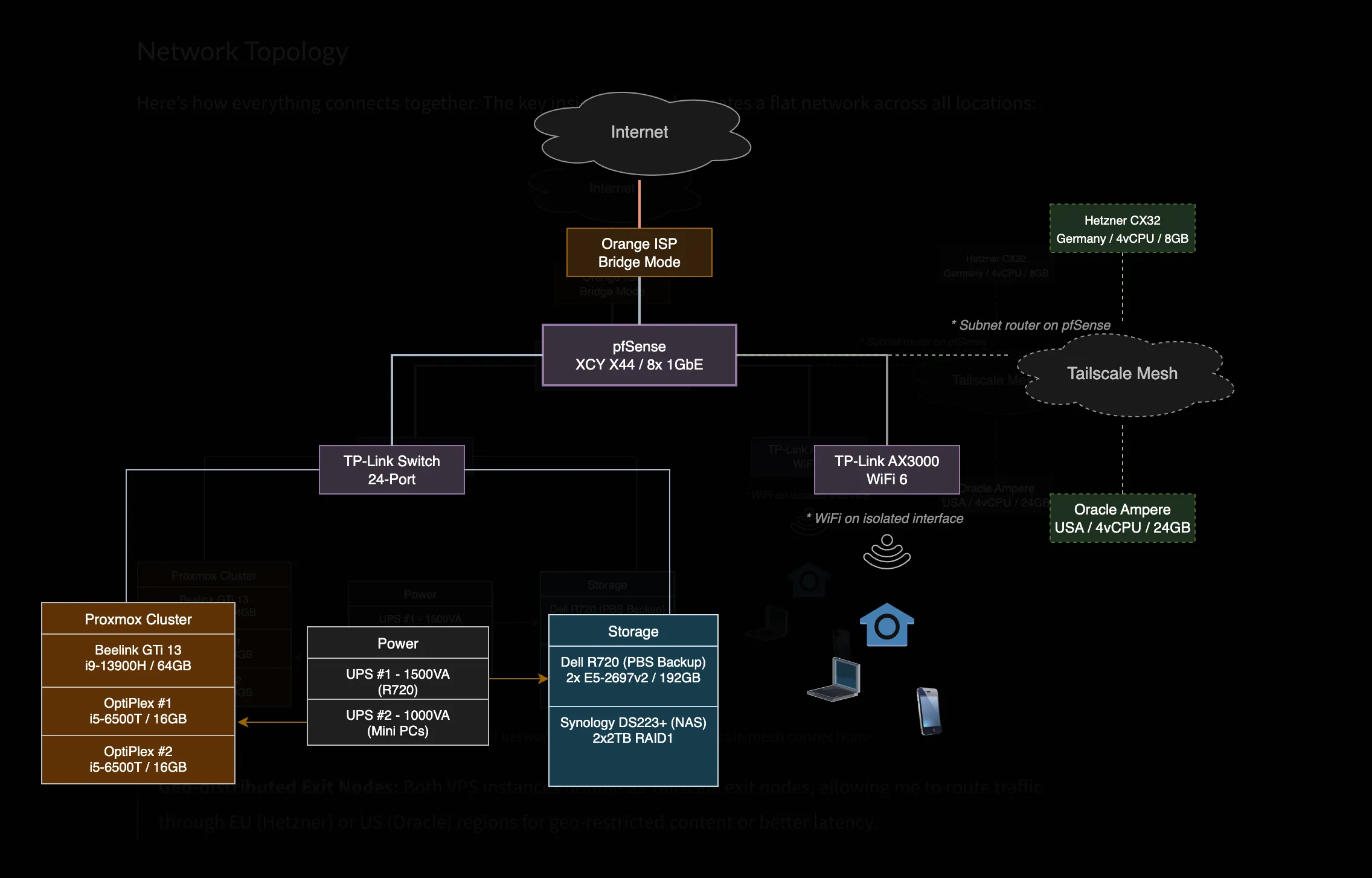

Network Architecture

Three dedicated physical interfaces on pfSense:

- WAN Interface → Orange ISP (Bridge Mode)

- LAN Interface → Homelab Network

- WiFi Interface → Guest/IoT Isolation

Warning

WiFi clients are firewalled from homelab services, except whitelisted ones like Jellyfin.

Tailscale creates a flat network across all locations — homelab, Hetzner, and Oracle all appear on the same mesh.

Tip

Both VPS instances double as Tailscale exit nodes, so I can route traffic through EU (Hetzner) or US (Oracle) for geo-restricted content or lower latency to specific regions.
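
Switching between the two exit nodes from a client comes down to one `tailscale set` call. A minimal sketch, assuming the VPS instances are registered in the tailnet under the hostnames `cloud-de` and `cloud-usa` (those names are borrowed from the Cloud section of this post; the real Tailscale hostnames may differ). The helper only builds the command, so nothing changes on the machine until you run it yourself.

```python
import shlex

# Hypothetical mapping: region label -> Tailscale hostname of the exit node.
EXIT_NODES = {"eu": "cloud-de", "us": "cloud-usa"}


def exit_node_command(region=None):
    """Return the `tailscale set` argv for a region; None clears the exit node."""
    if region is None:
        # An empty value tells the client to stop using an exit node.
        return ["tailscale", "set", "--exit-node="]
    if region not in EXIT_NODES:
        raise ValueError(f"unknown region: {region!r}")
    return ["tailscale", "set", f"--exit-node={EXIT_NODES[region]}"]


if __name__ == "__main__":
    print(shlex.join(exit_node_command("eu")))
    print(shlex.join(exit_node_command()))
```

The nodes themselves have to advertise as exit nodes and be approved in the admin console before a client can select them.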

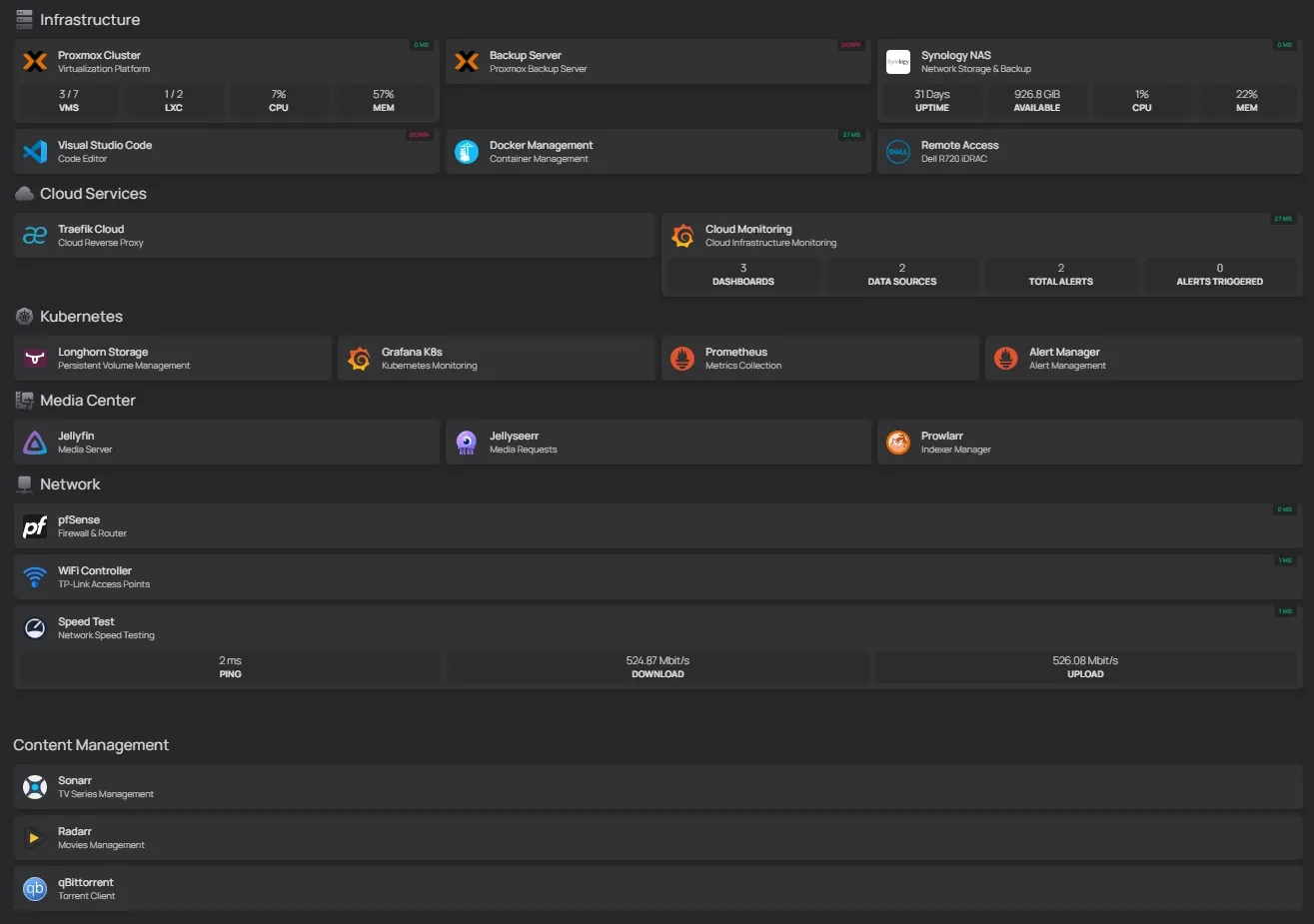

Infrastructure

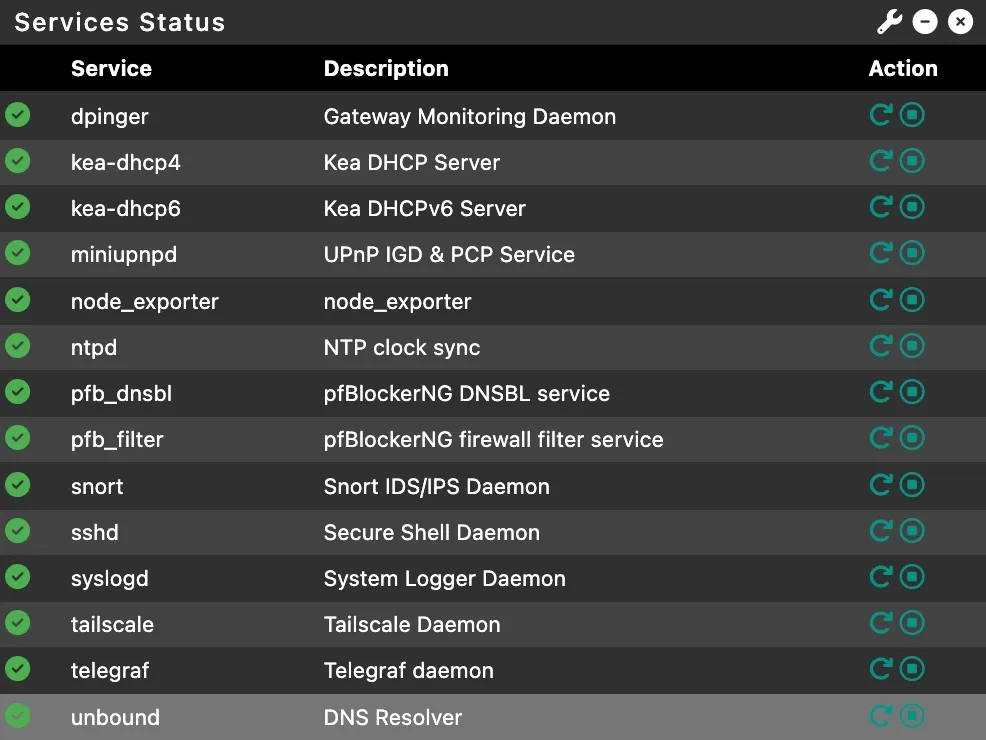

pfSense

A fanless mini PC from AliExpress (~€200) that has been running pfSense for 3+ years: 👉 XCY X44 on AliExpress

Tailscale Subnet Router exposes the entire homelab to cloud VPS without installing Tailscale on every device. Also the solution to CGNAT — when your ISP doesn’t give you a public IP, this gets you in. Setup guide →

Unbound DNS runs as a local recursive resolver with domain overrides for *.k8s.merox.dev pointing to K8s-Gateway.
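
To make the domain override concrete, here is a rough Python model of the decision the resolver makes for each query: names under the overridden zone go to K8s-Gateway, everything else is resolved recursively as usual. This is only an illustration of the matching logic, not Unbound's implementation, and the gateway address is a placeholder from the documentation range rather than the real one.

```python
# Hypothetical override table: zone -> address of the resolver that answers for it.
OVERRIDES = {"k8s.merox.dev": "192.0.2.53"}  # placeholder address for K8s-Gateway


def pick_resolver(qname, overrides=OVERRIDES):
    """Return the override target for qname, or None for normal recursion."""
    name = qname.rstrip(".").lower()
    best = None
    for zone, target in overrides.items():
        # Match the zone itself or any name below it, on a label boundary.
        if name == zone or name.endswith("." + zone):
            # Prefer the most specific (longest) matching zone.
            if best is None or len(zone) > len(best[0]):
                best = (zone, target)
    return best[1] if best else None


if __name__ == "__main__":
    for q in ("jellyfin.k8s.merox.dev", "merox.dev", "notk8s.merox.dev"):
        print(q, "->", pick_resolver(q) or "recursive lookup")
```

Matching on a label boundary matters: `notk8s.merox.dev` shares the suffix but is not inside the zone, so it must not be forwarded.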

Telegraf pushes system metrics to Grafana.

Firewall rules: WiFi → LAN blocks everything except whitelisted apps; LAN → WAN allows all; WAN → Internal blocks all except explicitly exposed services.
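
The three rules can be read as a small zone-based policy. The sketch below models them in Python purely to make the logic explicit; it is not how pfSense stores or evaluates rules. The zone names, the `Flow` type, and the empty `EXPOSED_FROM_WAN` set are illustrative assumptions, and flows the post does not describe (WiFi → WAN, for example) fall through to a default deny only because the sketch needs some default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    src: str      # "wifi", "lan", or "wan"
    dst: str
    service: str  # symbolic service name, e.g. "jellyfin"


WIFI_WHITELIST = {"jellyfin"}  # Jellyfin is the example named in the post
EXPOSED_FROM_WAN = set()       # hypothetical: fill in explicitly exposed services


def allowed(flow):
    """Evaluate a flow against the three rules described above."""
    if flow.src == "wifi" and flow.dst == "lan":
        return flow.service in WIFI_WHITELIST
    if flow.src == "lan" and flow.dst == "wan":
        return True
    if flow.src == "wan" and flow.dst in {"lan", "wifi"}:
        return flow.service in EXPOSED_FROM_WAN
    # Assumption: anything not covered by the rules above is denied.
    return False


if __name__ == "__main__":
    print(allowed(Flow("wifi", "lan", "jellyfin")))  # whitelisted app
    print(allowed(Flow("wifi", "lan", "ssh")))       # blocked
```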

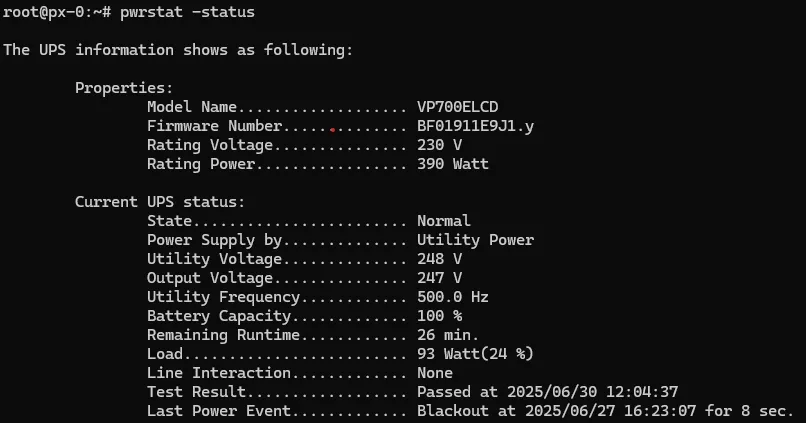

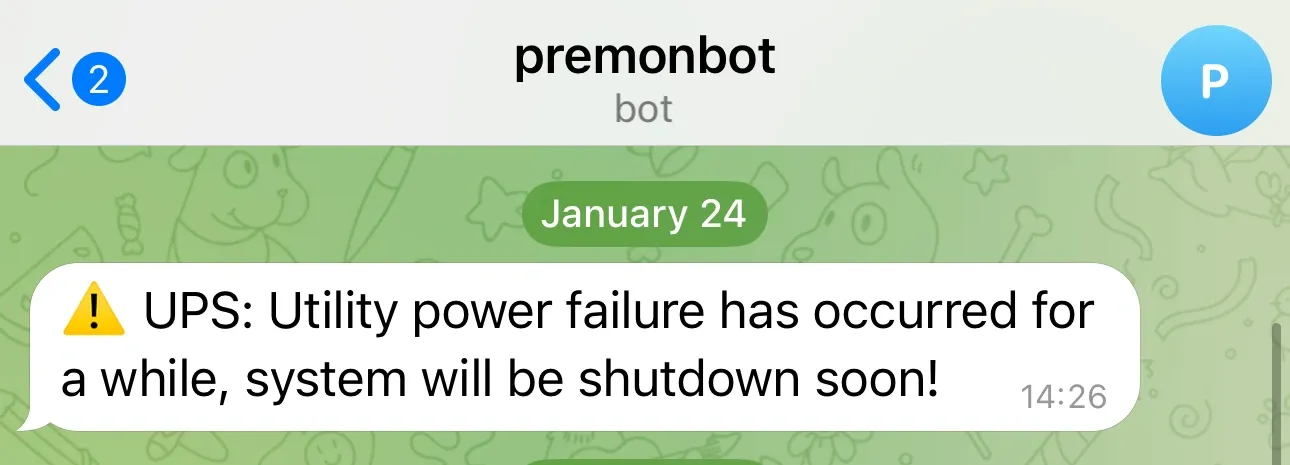

UPS Power Management

The CyberPower 1000VA covers all mini PCs and network gear. When power fails, it triggers a cascading shutdown — Kubernetes nodes drain properly before Proxmox hosts go down.

| Feature | Implementation | Purpose |

|---|---|---|

| pwrstat | USB to GTi13 Pro | Automated shutdown orchestration |

| SSH Scripts | Custom automation | Graceful cluster shutdown |

| Monitoring | Telegram alerts | Real-time power notifications |
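
The ordering is the important part of a cascading shutdown: workloads are evicted first, hosts go down last. The sketch below builds such a plan as a list of commands. It is not the author's script; the node and host names are placeholders, and it assumes `kubectl` and SSH key access are available on the machine that receives the UPS event.

```python
import subprocess

# Hypothetical inventory: node and host names are placeholders, not from the post.
K8S_NODES = ["talos-1", "talos-2", "talos-3"]
# The host with the UPS USB cable goes last so the orchestrator outlives the rest.
PROXMOX_HOSTS = ["pve-optiplex-1", "pve-optiplex-2", "pve-gti13"]


def shutdown_plan(k8s_nodes=K8S_NODES, hosts=PROXMOX_HOSTS):
    """Return commands in cascade order: drain every K8s node, then halt hosts."""
    drains = [
        ["kubectl", "drain", node, "--ignore-daemonsets",
         "--delete-emptydir-data", "--timeout=120s"]
        for node in k8s_nodes
    ]
    halts = [["ssh", f"root@{host}", "shutdown", "-h", "now"] for host in hosts]
    return drains + halts


def run(plan, dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for argv in plan:
        print(" ".join(argv))
        if dry_run:
            continue
        try:
            # check=False: a failed step should not stop the rest of the cascade.
            subprocess.run(argv, check=False, timeout=180)
        except subprocess.TimeoutExpired:
            print("timed out, moving on")


if __name__ == "__main__":
    run(shutdown_plan())  # dry run: only prints the plan
```

Running it in dry-run mode first is a cheap way to check the order before wiring it to a real power event.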

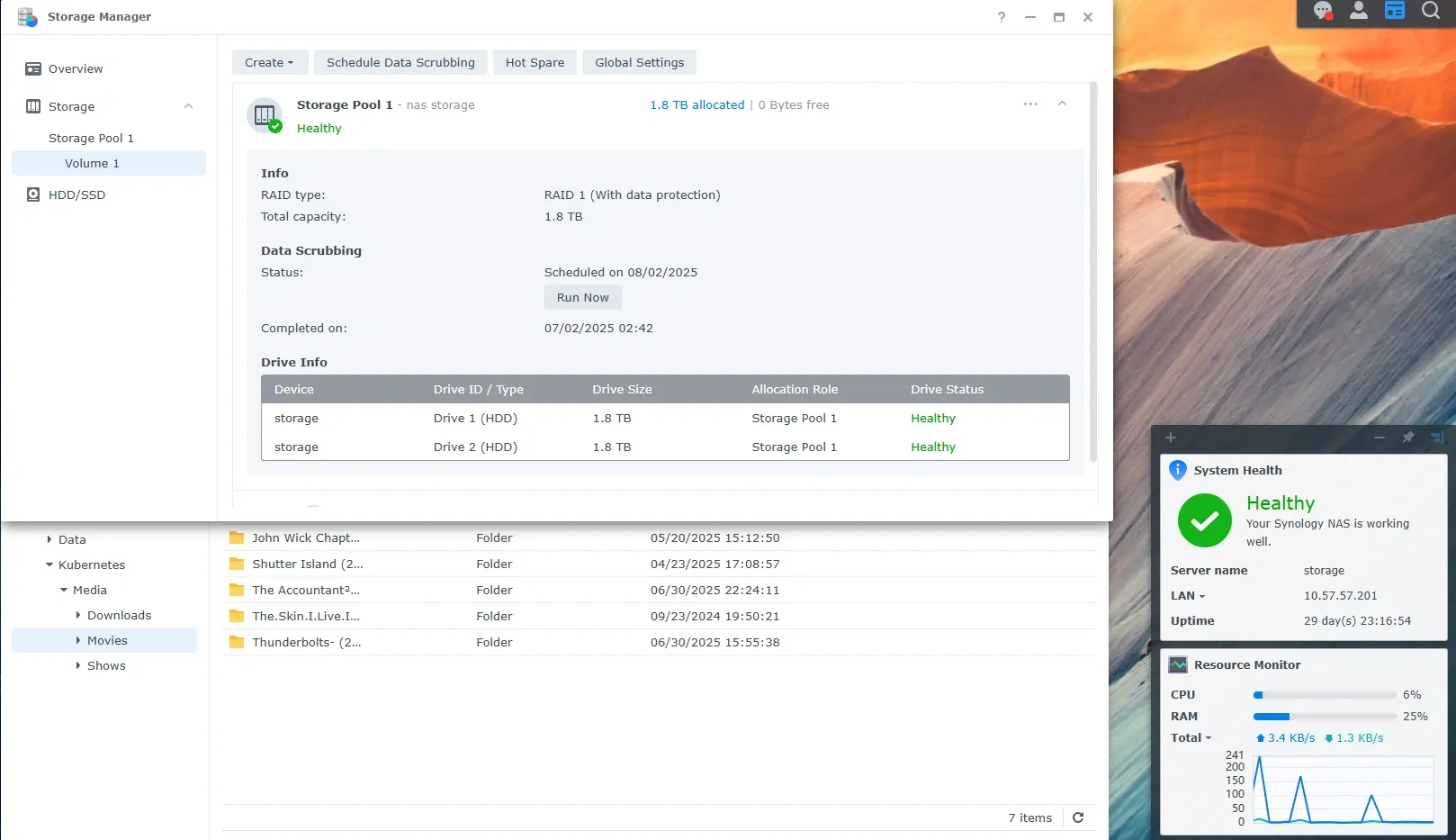

Synology DS223+

Dual purpose: NFS/SMB shares for the ARR stack (still experimenting with both protocols), and personal cloud via Synology Drive.

After 3 years of self-hosting Nextcloud, I switched. Better performance, native mobile apps that actually work, and zero maintenance. Sometimes the best self-hosted solution is the one you never have to think about.

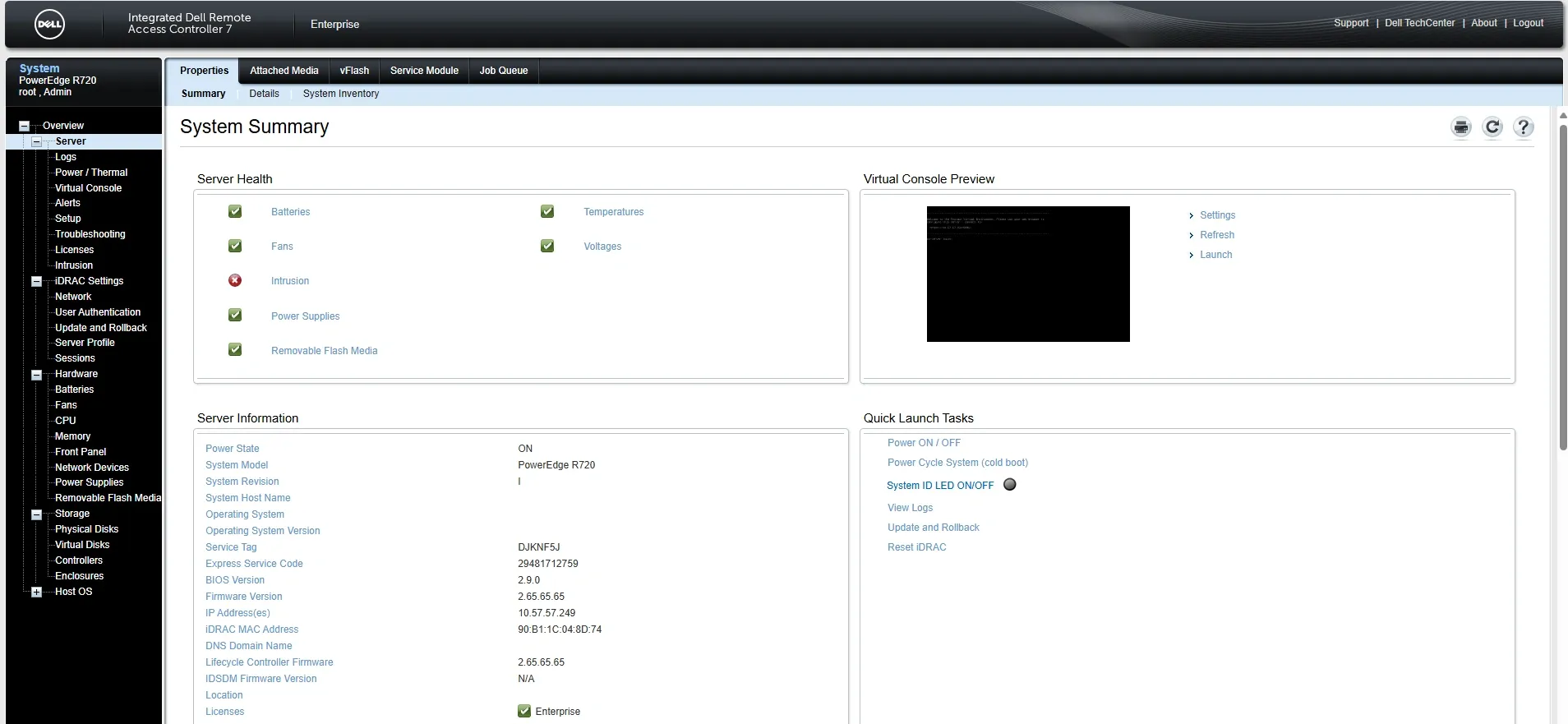

Dell R720

The power-hungry workhorse. Its role has changed a few times:

| Period | Purpose | Configuration |

|---|---|---|

| Phase 1 | Proxmox hypervisor | 24C/48T, 192GB RAM |

| Phase 2 | AI Playground | Quadro P2200 + Ollama + Open WebUI |

| Current | Backup Target | 4× 960GB RAID-Z2, weekly MinIO sync |

The most interesting project was flashing the PERC controller to IT mode — bypasses hardware RAID so the OS sees drives directly. Fohdeesha’s crossflash guide covers H710/H310 and more.

At ~200W idle, running 24/7 would cost ~€20/month in electricity. Instead: Wake-on-LAN 1–2× weekly, pull MinIO backups from Hetzner, done.
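
Two small pieces behind that paragraph, sketched in Python. The cost function reproduces the estimate: 200 W around the clock is 144 kWh in a 30-day month, which lands at about €20.16 if the tariff is €0.14/kWh (the tariff is an assumed value, not one stated above). The second part builds a standard Wake-on-LAN magic packet: six `0xFF` bytes followed by the target MAC repeated 16 times, sent as a UDP broadcast. The MAC in the example is a placeholder.

```python
import socket

HOURS_PER_MONTH = 24 * 30  # 30-day month, for a round figure


def monthly_cost_eur(watts, eur_per_kwh, hours=HOURS_PER_MONTH):
    """Energy cost for a constant load: kW x hours x price per kWh."""
    return watts / 1000 * hours * eur_per_kwh


def magic_packet(mac):
    """Wake-on-LAN payload: 6 bytes of 0xFF, then the MAC repeated 16 times."""
    raw = bytes.fromhex(mac.replace(":", "").replace("-", ""))
    if len(raw) != 6:
        raise ValueError(f"expected a 6-byte MAC address, got {mac!r}")
    return b"\xff" * 6 + raw * 16


def wake(mac, broadcast="255.255.255.255", port=9):
    """Broadcast the magic packet over UDP (port 9 is a common convention)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(magic_packet(mac), (broadcast, port))


if __name__ == "__main__":
    # Assumed tariff of 0.14 EUR/kWh; adjust to your own contract.
    print(f"24/7 at 200 W: ~EUR {monthly_cost_eur(200, 0.14):.2f}/month")
    # wake("00:11:22:33:44:55")  # placeholder MAC, uncomment with a real one
```

Wake-on-LAN also has to be enabled in the server's BIOS/NIC settings, and the packet must reach the same broadcast domain as the target.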

Warning

Still constantly changing my mind about what to run here.

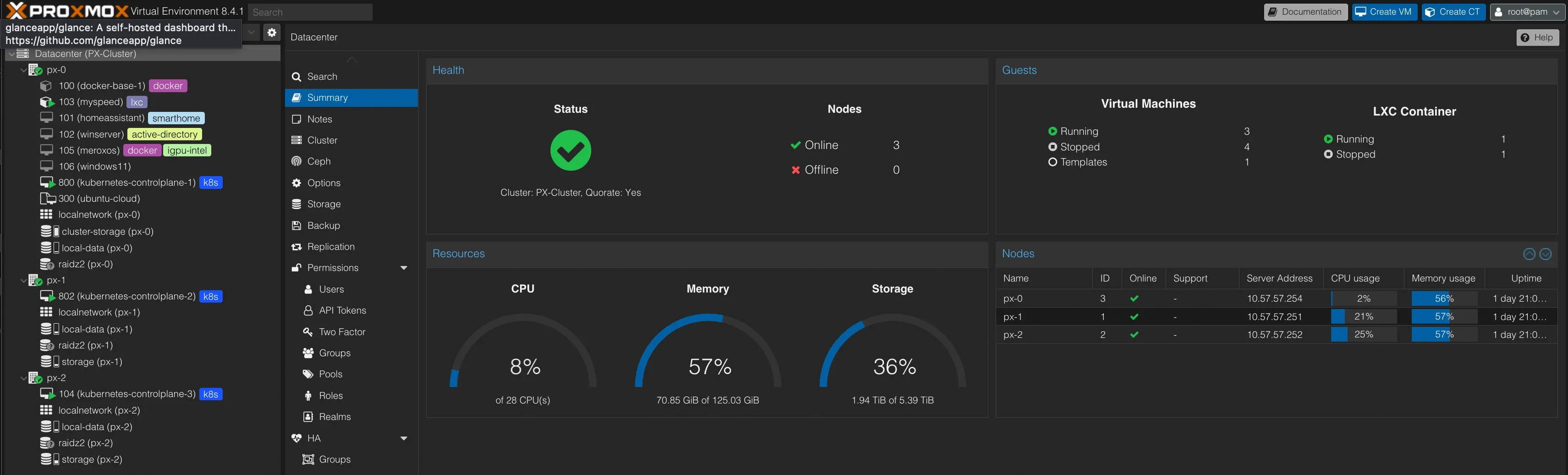

Proxmox Cluster

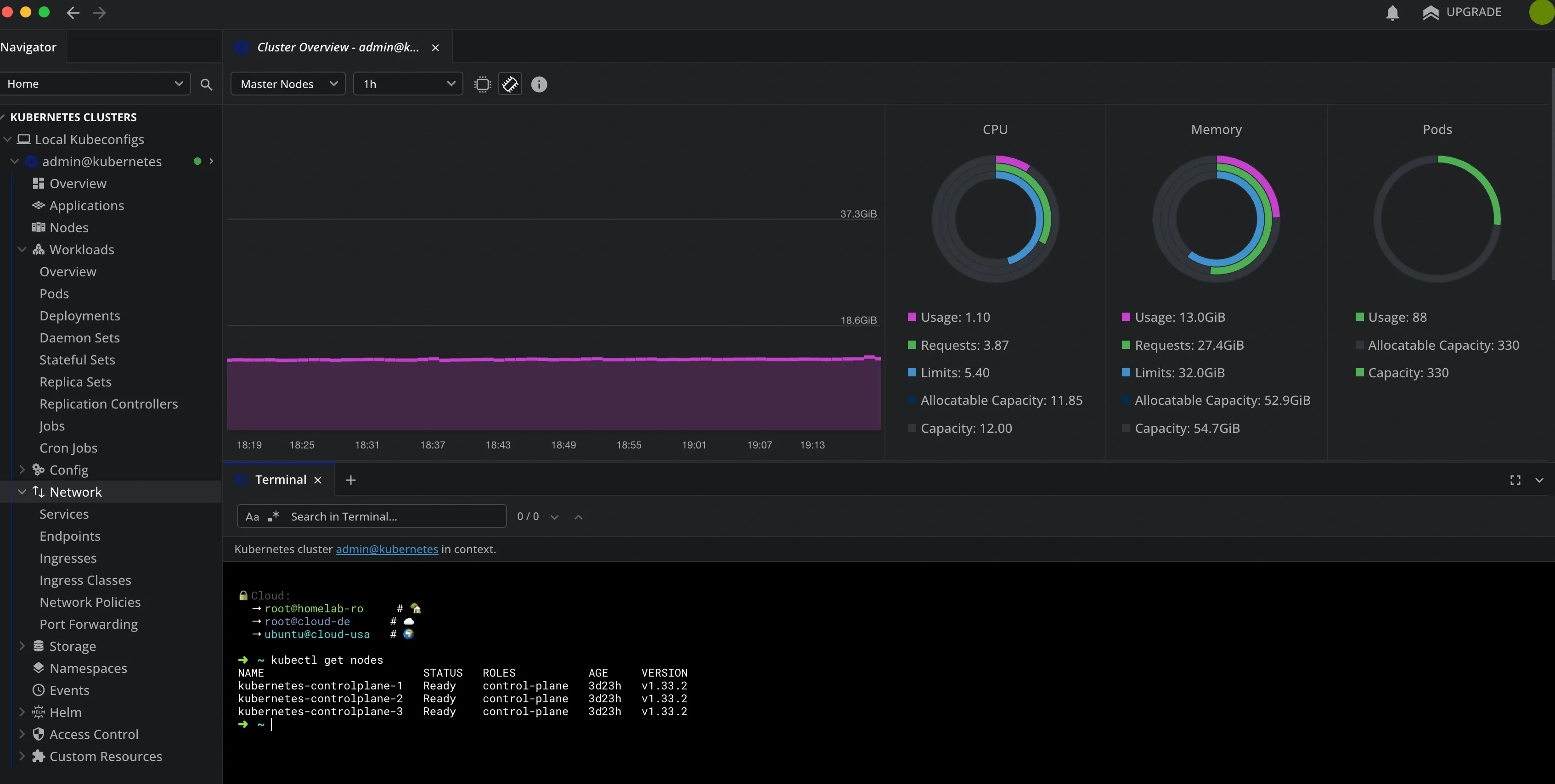

Three-node cluster across the mini PCs. Each node runs one Talos VM, so Kubernetes has HA across physical hosts with no single point of failure.
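
The reason three nodes is the usual minimum for this kind of HA is majority quorum: a cluster of n voting members needs more than half of them alive to keep making decisions. A short sketch of the arithmetic, assuming all three Talos VMs are control-plane (etcd) members, which the post does not state explicitly:

```python
def quorum(members):
    """Smallest majority of a cluster with the given number of voting members."""
    return members // 2 + 1


def tolerated_failures(members):
    """How many members can be lost while a majority is still reachable."""
    return members - quorum(members)


if __name__ == "__main__":
    for n in range(1, 6):
        print(f"{n} members: quorum {quorum(n)}, survives {tolerated_failures(n)} failure(s)")
```

With three members the quorum is two, so one physical host can fail or be rebooted without taking the control plane down. A fourth member would not improve on that, which is why odd sizes are the norm.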

Current VMs:

| VM | Purpose | Specs | Notes |

|---|---|---|---|

| 3× Talos Kubernetes | K8s nodes | 4vCPU/16GB/1TB | Intel iGPU passthrough |

| meroxos | Docker host | 4vCPU/8GB/500GB | For simpler services |

| Windows Server 2019 | AD Lab | 4vCPU/8GB/100GB | Active Directory experiments |

| Windows 11 | Remote desktop | 4vCPU/8GB/50GB | Always-ready Windows machine |

| Home Assistant | Home automation | 2vCPU/4GB/32GB | |

| Kali Linux | Security testing | 2vCPU/4GB/50GB | To be restored from backup |

| GNS3 | Network lab | 4vCPU/8GB/100GB | To be restored from backup |

Home Assistant is intentionally minimal for now. The most interesting automation: location-based Dell R720 fan control — quieter when I’m home, ramped up when away. Details →
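
For readers who want the general shape before following the link: one common way to control fans on 12th-generation Dell servers is through iDRAC's IPMI raw commands, and presence-based control then reduces to picking a duty cycle and sending two commands. The sketch below is an assumption about how such an automation could look, not a copy of the linked implementation. The percentages, host, and user are hypothetical, and it expects `ipmitool` to be installed with IPMI over LAN enabled in iDRAC.

```python
# Hypothetical duty cycles in percent; tune them against real temperatures.
HOME_PERCENT = 15
AWAY_PERCENT = 40


def fan_commands(someone_home, idrac_host, user):
    """Return the two ipmitool invocations that pin the fans to a fixed speed."""
    percent = HOME_PERCENT if someone_home else AWAY_PERCENT
    # -E makes ipmitool read the password from the IPMI_PASSWORD env variable.
    base = ["ipmitool", "-I", "lanplus", "-H", idrac_host, "-U", user, "-E"]
    return [
        # Switch the fans from automatic to manual control.
        base + ["raw", "0x30", "0x30", "0x01", "0x00"],
        # Set all fans (0xff) to the chosen duty cycle, given as a hex byte.
        base + ["raw", "0x30", "0x30", "0x02", "0xff", f"0x{percent:02x}"],
    ]


if __name__ == "__main__":
    for argv in fan_commands(someone_home=True, idrac_host="idrac.example", user="root"):
        print(" ".join(argv))
```

Manual fan control bypasses the server's own thermal curve, so any real automation should also watch temperatures and hand control back to iDRAC when they climb.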

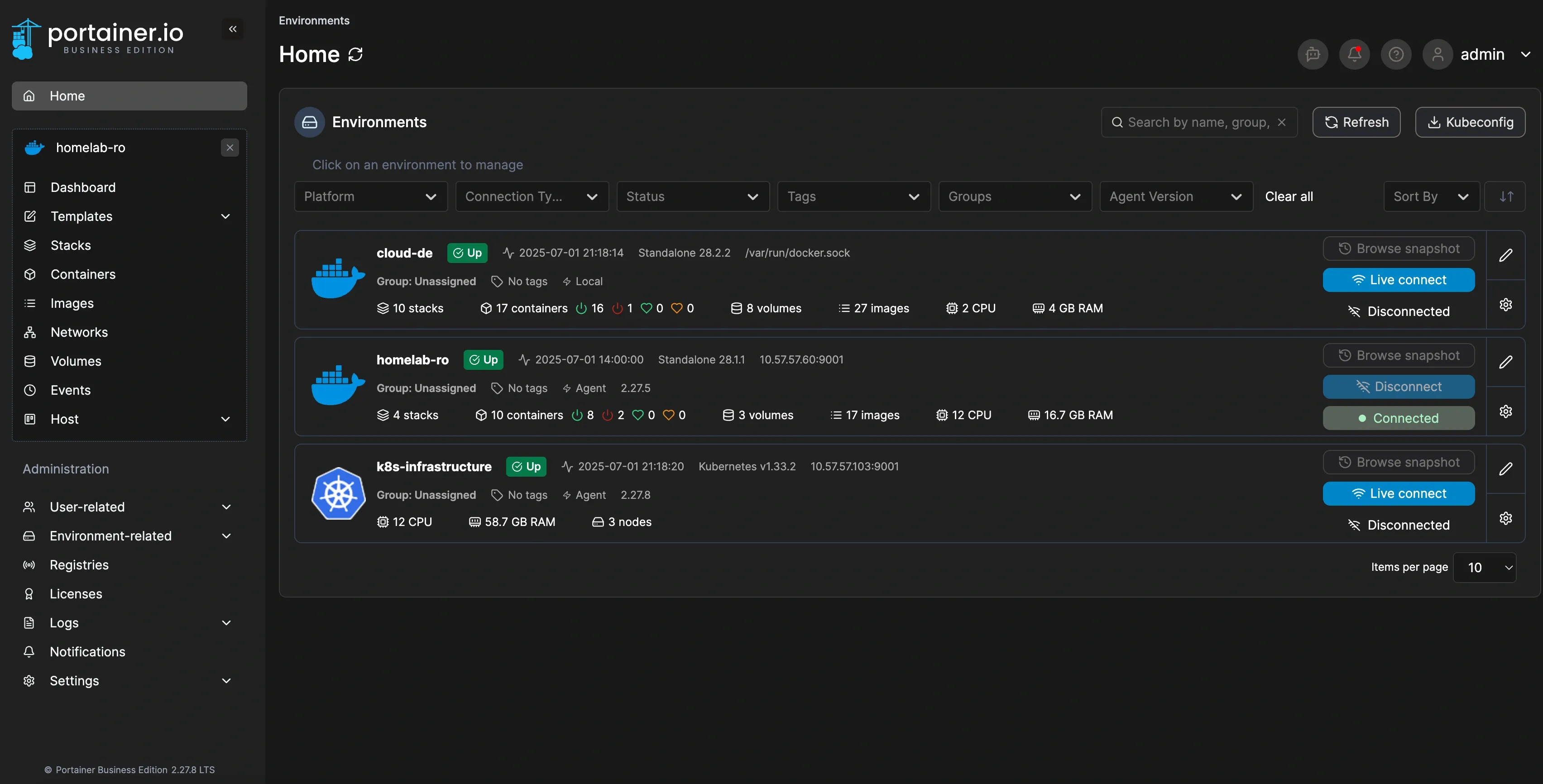

Cloud

All managed through a single Portainer instance at cloud.merox.dev:

cloud-de (Hetzner CX22, ~€4/month) — always-on, external monitoring for when the homelab is down:

| Service | Purpose |

|---|---|

| Grafana + Prometheus + Alertmanager | External homelab monitoring |

| Pi-hole | Dedicated Tailscale split-DNS |

| Traefik | SSL for all VPS services |

| Guacamole | Remote access via Cloudflare Tunnel |

| Firefox container | GUI access via Guacamole |

homelab-ro (local Docker) — the escape hatch when Kubernetes complexity becomes too much:

| Service | Purpose |

|---|---|

| ARR Stack | Quick restore when Kubernetes fails |

| Netboot.xyz | PXE / network boot |

| Portainer Agent | Remote Docker management |

Because sometimes you just need things to work without debugging YAML manifests at 2 AM.

cloud-usa (Oracle Free Tier) — test ground for experimental images and US Tailscale exit node. Not in Portainer — hit the 5-node limit with 3× Kubernetes + 2× Docker.

Talos & Kubernetes

Fair warning: this is where I went full “because I can” mode. If you just want to run services, Docker is the right answer. But if you want to learn enterprise-grade container orchestration in your homelab, keep reading.

The starting point: onedr0p/cluster-template

Talos OS was the first immutable, declarative OS I’d run. After a few days of troubleshooting, I was sold.

Tip

Why Talos over K3s? Immutable OS means less maintenance, GitOps-first design, declarative everything, and it’s closer to what you’d run in production.

My infrastructure repo: 👉 github.com/meroxdotdev/infrastructure

Key customizations:

| Component | Modification | Reason |

|---|---|---|

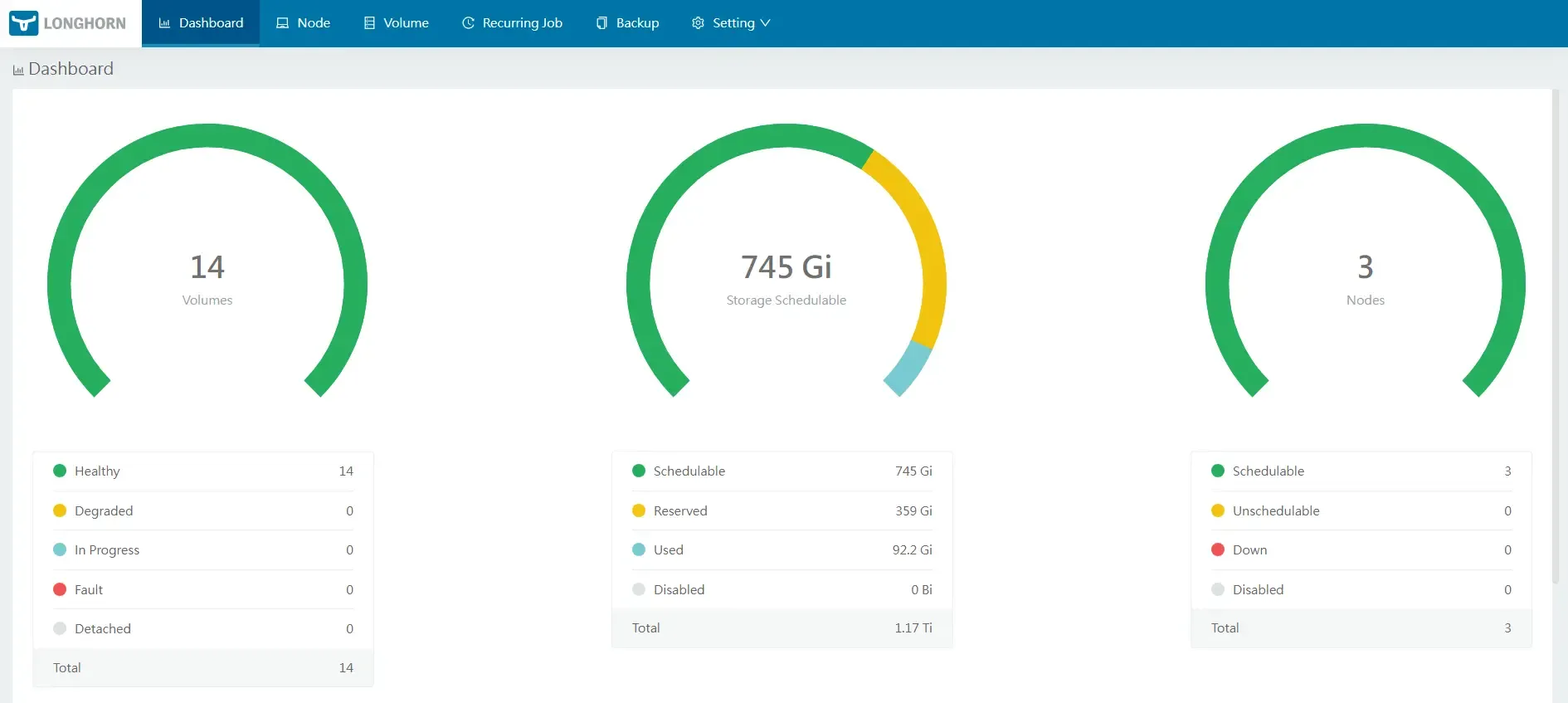

| Storage | Longhorn CSI | Simpler PV/PVC management |

| Talos Patches | Custom machine config | Longhorn requirements |

| Custom Image | factory.talos.dev | Intel iGPU + iSCSI support |

The custom Talos image includes Linux driver tools, iSCSI-tools for network storage, and Intel iGPU drivers for Proxmox passthrough.

GitOps structure:

    infrastructure/kubernetes/apps/
    ├── storage/        # Longhorn configuration
    ├── observability/  # Prometheus, Grafana, Loki (WIP)
    └── default/        # Production workloads

Day-to-day management is done with Lens.

Deployed apps:

| App | Purpose | Notes |

|---|---|---|

| Radarr | Movie automation | NFS to Synology |

| Sonarr | TV automation | NFS to Synology |

| Prowlarr | Indexer manager | Central search |

| qBittorrent | Torrent client | ⚠️ Use v5.0.4 for GUI config |

| Jellyseerr | Request management | Public via Cloudflare |

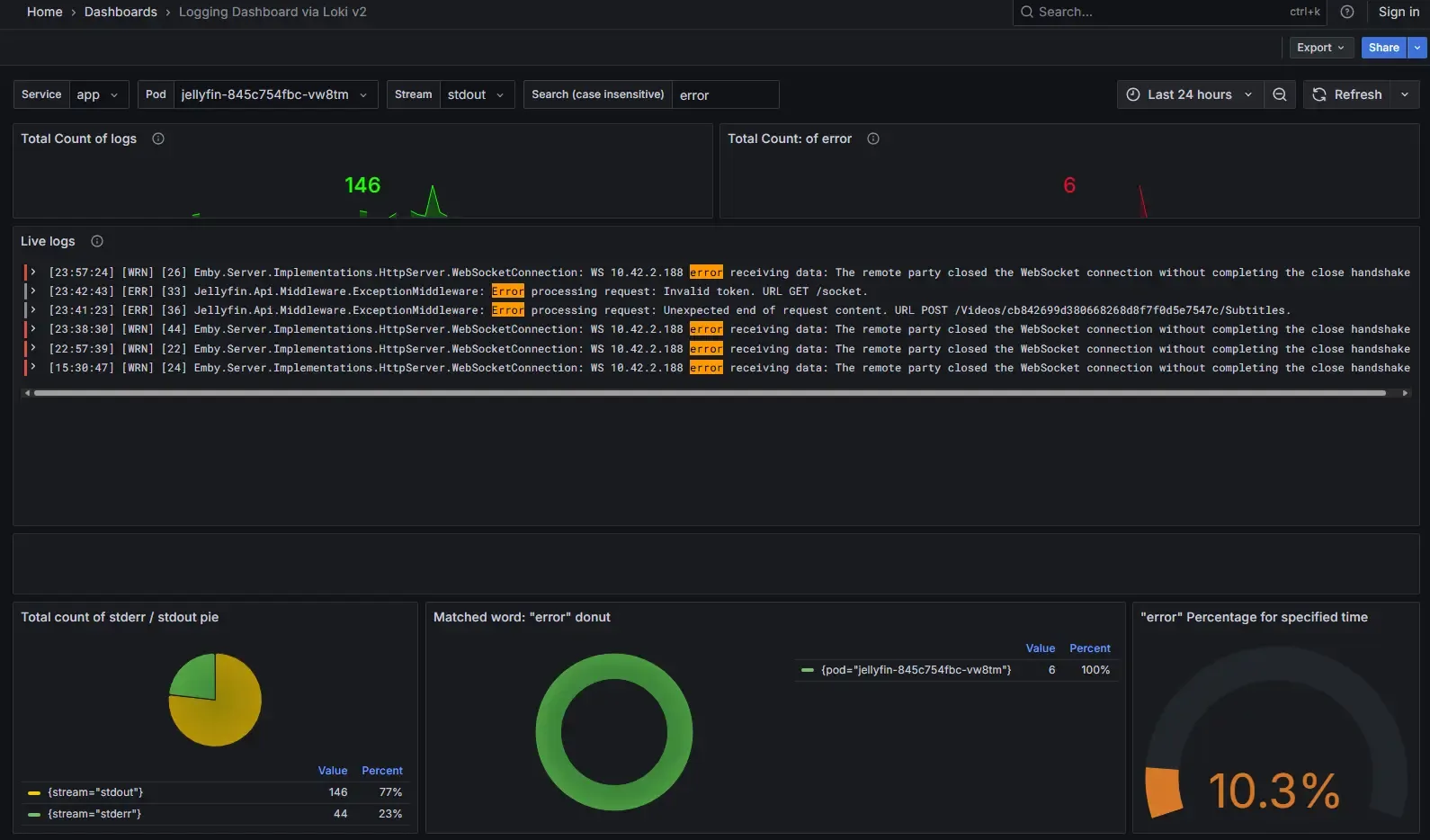

| Jellyfin | Media server | Intel QuickSync enabled |

| Homepage | Dashboard | Still organizing… |

With this setup I can fully rebuild the cluster in 8–9 minutes — declarative config for everything, GitOps workflow with Flux, Renovate bot keeping dependencies updated.

Warning

Keep your SOPS keys backed up separately. You’ll need them to decrypt the repository when rebuilding from scratch.

Backup Strategy

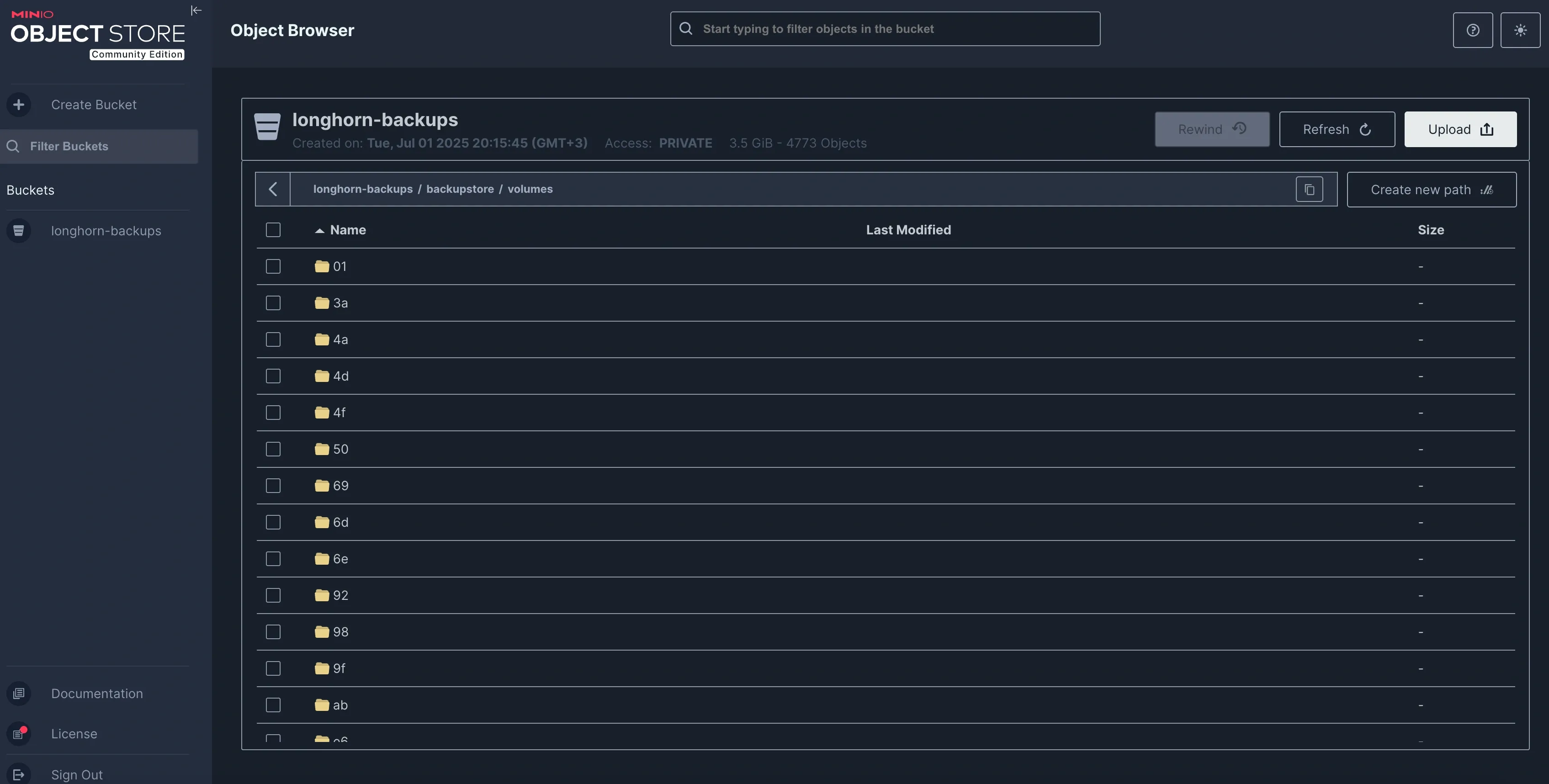

- Longhorn PVCs → daily backup to MinIO on R720

- MinIO on R720 → weekly sync to Hetzner Storage Box

Complete 3-2-1 across local, on-prem, and offsite. For full details on the backup chain and recovery procedures, see Safeguarding My Critical Data.
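
As a quick sanity check, the 3-2-1 rule (at least three copies, on at least two kinds of storage, with at least one offsite) can be written down as a few lines of Python. The copy names follow the chain above; the `medium` labels are assumptions made for illustration, since the post does not spell out what counts as a distinct medium here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    name: str
    medium: str    # assumed label for the kind of storage
    offsite: bool


COPIES = [
    BackupCopy("Longhorn volumes on the cluster", "nvme", offsite=False),
    BackupCopy("MinIO on R720", "ssd-raidz2", offsite=False),
    BackupCopy("Hetzner Storage Box", "remote-storage", offsite=True),
]


def satisfies_321(copies):
    """True if there are >= 3 copies, >= 2 distinct media, and >= 1 offsite copy."""
    enough_copies = len(copies) >= 3
    enough_media = len({c.medium for c in copies}) >= 2
    has_offsite = any(c.offsite for c in copies)
    return enough_copies and enough_media and has_offsite


if __name__ == "__main__":
    print(satisfies_321(COPIES))
```

Dropping the Hetzner copy makes the check fail on two counts at once, which is a good reminder of how much the offsite leg carries.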

For cluster deployment details, the onedr0p/cluster-template README is surprisingly well written and worth following directly.