Hardware

Compute

| Device | CPU | RAM | Storage | Purpose |

|---|---|---|---|---|

| Beelink GTi 13 | i9-13900H (14C/20T) | 64GB DDR5 | 2× 2TB NVMe | Proxmox (px-0) |

| OptiPlex #1 | i5-6500T (4C/4T) | 32GB DDR4 | 128GB NVMe | Proxmox (px-1) |

| OptiPlex #2 | i5-6500T (4C/4T) | 32GB DDR4 | 128GB NVMe | Proxmox (px-2) |

| Synology DS223+ | ARM RTD1619B | 2GB | 2× 2TB RAID1 | NAS/Media |

Network Gear

| Device | Model | Specs | Purpose |

|---|---|---|---|

| ONT | Huawei | 1GbE | ISP Gateway |

| Firewall | XCY X44 | 8× 1GbE | pfSense Router |

| WiFi | TP-Link AX3000 | WiFi 6 | Wireless AP |

| Switch | TP-Link | 24-port | Core Switch |

Power Protection

| Device | Model | Protected Equipment | Capacity |

|---|---|---|---|

| UPS #1 | CyberPower | Mini PCs (Proxmox cluster) | 1500VA |

| UPS #2 | CyberPower | Network gear | 1000VA |

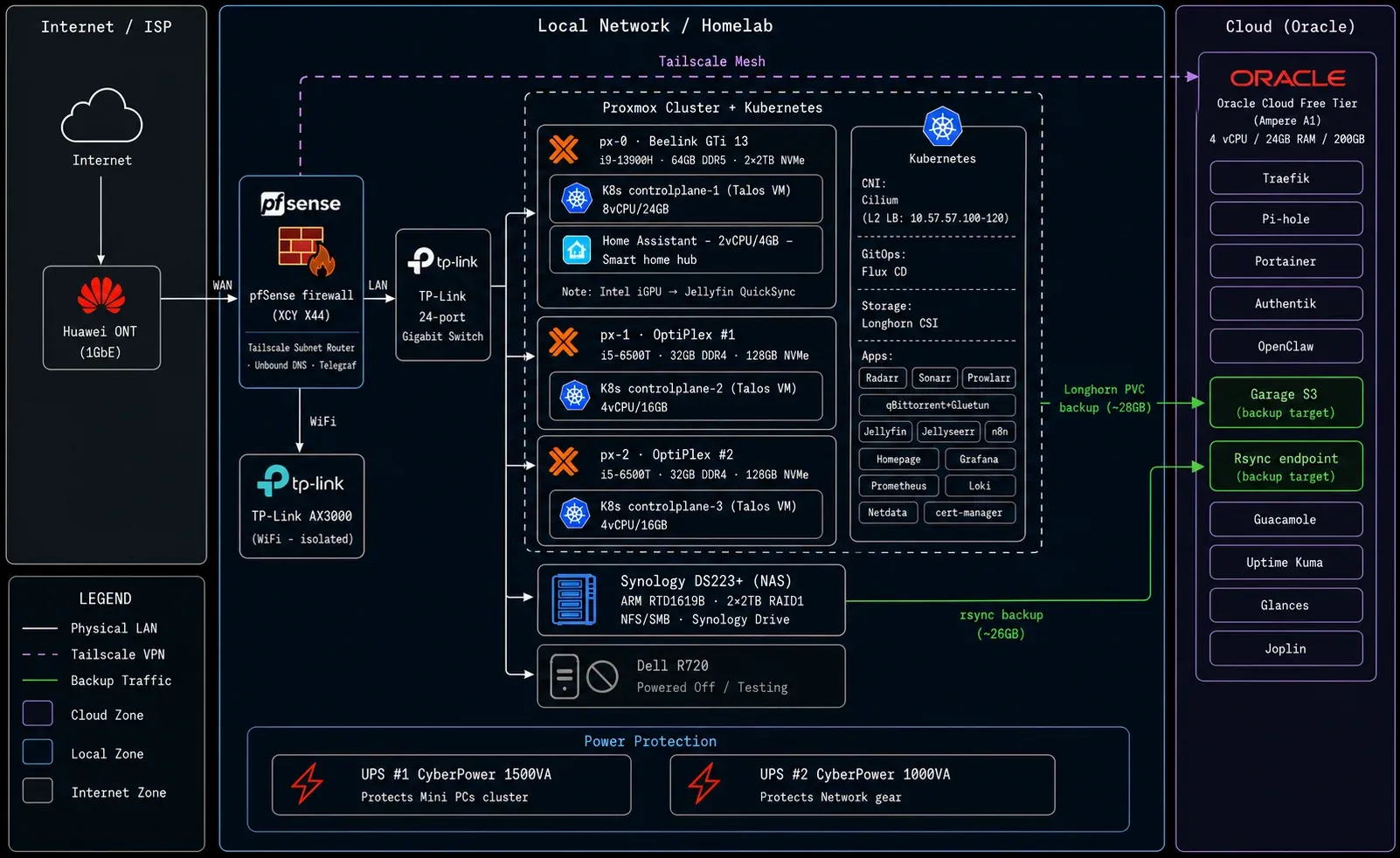

Network

Three dedicated physical interfaces on pfSense:

WAN Interface → Orange ISP (Bridge Mode)LAN Interface → Homelab NetworkWiFi Interface → Guest/IoT IsolationWarning

WiFi clients are firewalled from homelab services, except whitelisted ones like Jellyfin.

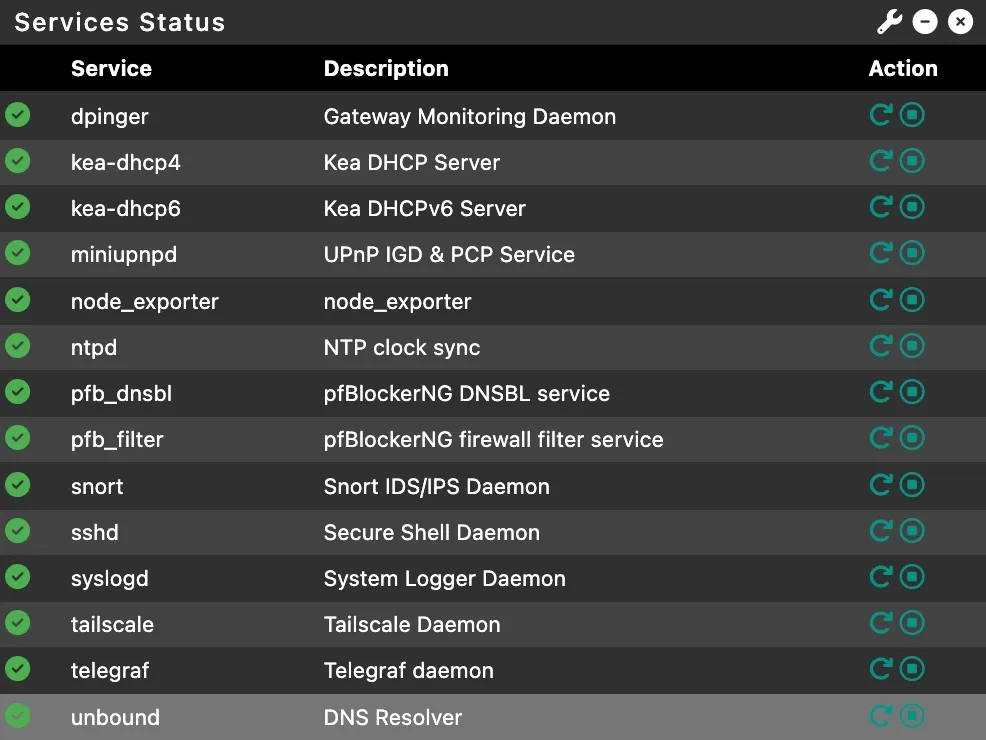

pfSense

A fanless mini PC from AliExpress (~200€) running pfSense for 3+ years: XCY X44 on AliExpress

Tailscale Subnet Router exposes the entire homelab to the cloud VPS without installing Tailscale on every device. Also the solution to CGNAT — when your ISP doesn’t give you a public IP, this gets you in. Full setup guide →

Unbound DNS runs as a local recursive resolver with domain overrides for *.k8s.merox.dev pointing to K8s-Gateway.

Telegraf pushes system metrics to Grafana.

Firewall rules: WiFi → LAN blocks everything except whitelisted apps; LAN → WAN allows all; WAN → Internal blocks all except explicitly exposed services.

Tailscale Mesh

Tailscale creates a flat network across all locations — the homelab rack and the Oracle Cloud VPS both appear on the same mesh. No VPN tunnels to configure, no firewall holes to punch.

Tip

The Oracle instance doubles as a Tailscale exit node — useful for routing traffic through the US when needed.

The Subnet Router on pfSense means every device in the homelab (including things like the Synology and iDRAC interfaces) is reachable from anywhere on the mesh without touching their individual configs.

Virtualization

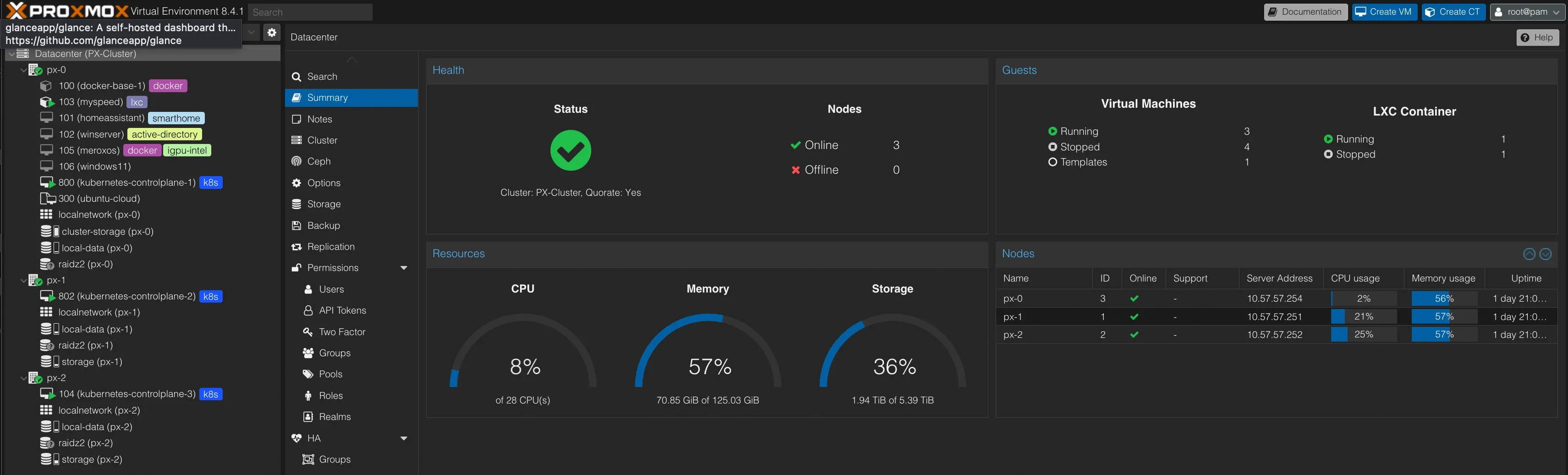

Proxmox Cluster

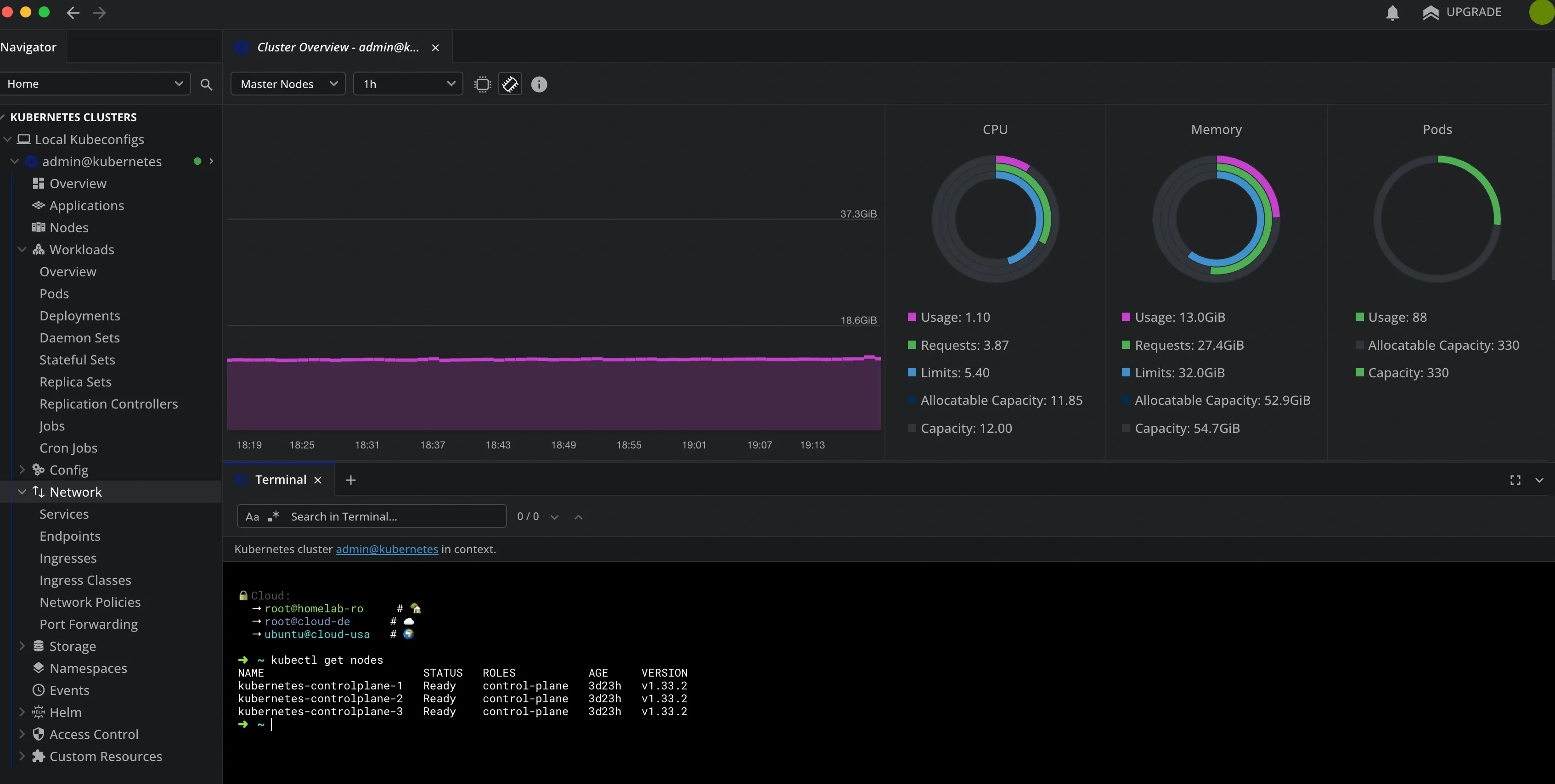

Three-node cluster across the mini PCs. Each node runs one Talos VM, so Kubernetes has HA across physical hosts with no single point of failure.

Nodes:

| Node | Device | CPU | RAM | Role |

|---|---|---|---|---|

| px-0 | Beelink GTi 13 | i9-13900H (20T) | 64GB | Primary — hosts K8s controlplane-1 |

| px-1 | OptiPlex #1 | i5-6500T (4T) | 32GB | K8s controlplane-2 |

| px-2 | OptiPlex #2 | i5-6500T (4T) | 32GB | K8s controlplane-3 |

Storage:

| Pool | Type | Used | Total |

|---|---|---|---|

| cluster-storage | ZFS | 713GB | 899GB |

| synology-nas | NFS | 985GB | 1.4TB |

| local-data | dir | 177GB | 812GB |

Current VMs:

| VM | Purpose | Specs | Status |

|---|---|---|---|

| kubernetes-controlplane-1 | K8s node (px-0) | 8vCPU/24GB | Running |

| kubernetes-controlplane-2 | K8s node (px-1) | 4vCPU/16GB | Running |

| kubernetes-controlplane-3 | K8s node (px-2) | 4vCPU/16GB | Running |

| Home Assistant | Smart home hub | 2vCPU/4GB | Running |

| Windows 10 | Lab / testing | 4vCPU/12GB | Stopped |

| Windows Server 2019 | AD Lab | 8vCPU/14GB | Stopped |

| Windows 11 | Remote desktop | 8vCPU/16GB | Stopped |

Intel Iris Xe GPU on px-0 is passed through to the kubernetes-controlplane-1 VM for Jellyfin hardware transcoding (Intel QuickSync). GPU passthrough guide →

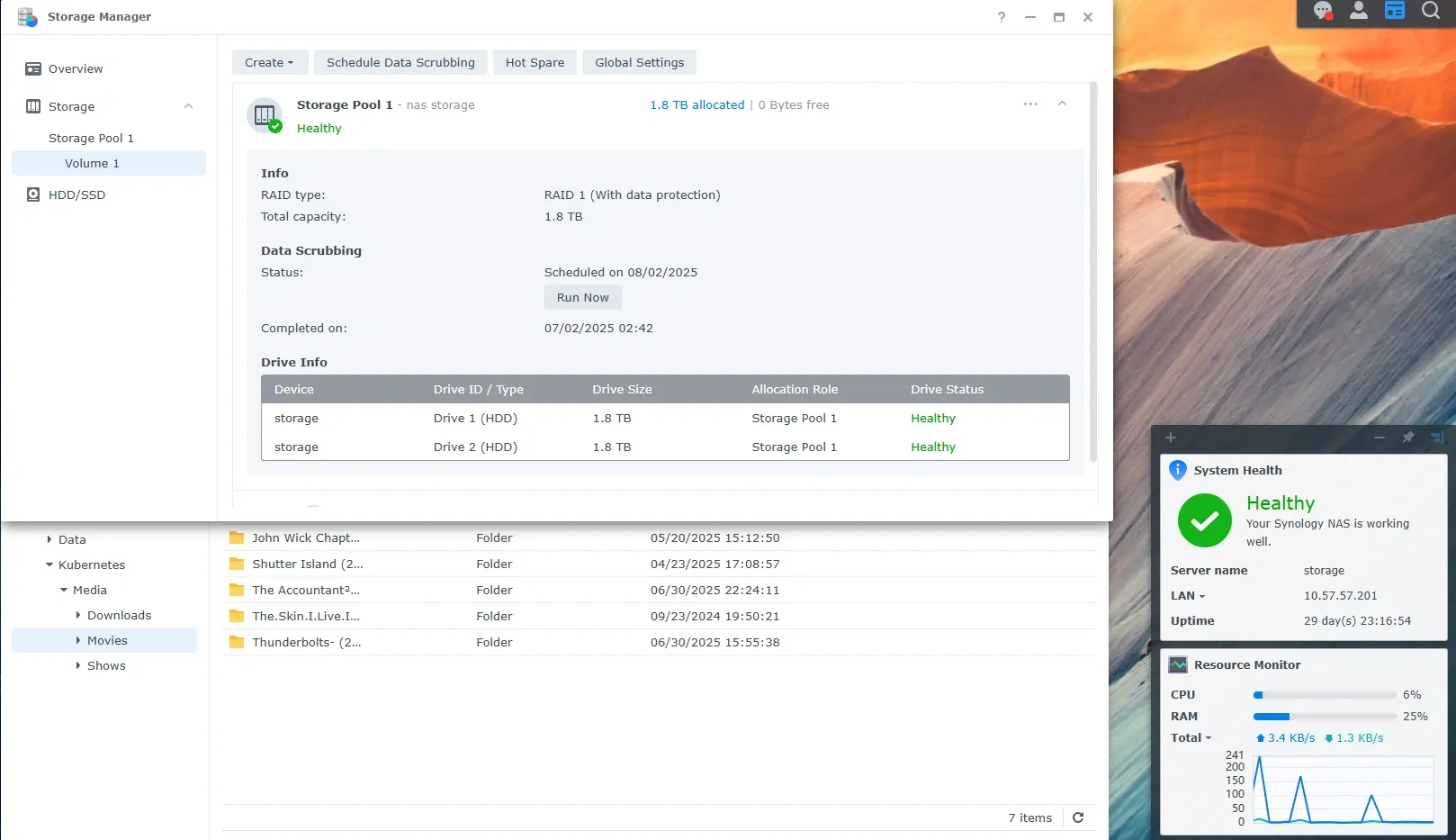

Synology DS223+

Dual purpose: NFS/SMB shares for the ARR stack (still experimenting with both protocols), and personal cloud via Synology Drive.

After 3 years of self-hosting Nextcloud, I switched. Better performance, native mobile apps that actually work, and zero maintenance. Sometimes the best self-hosted solution is the one you never have to think about.

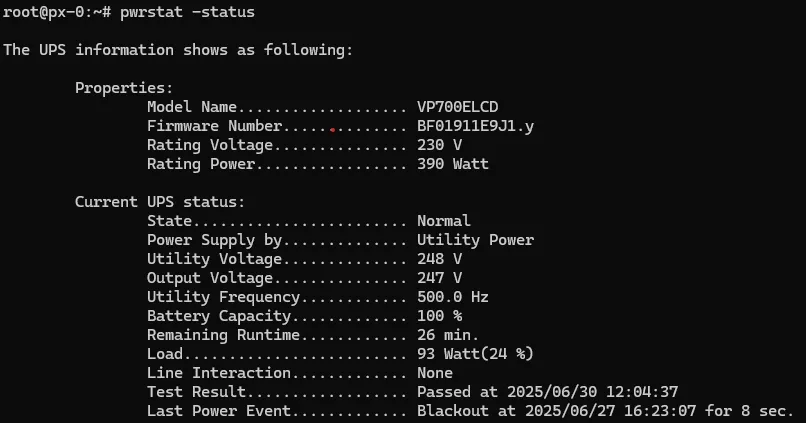

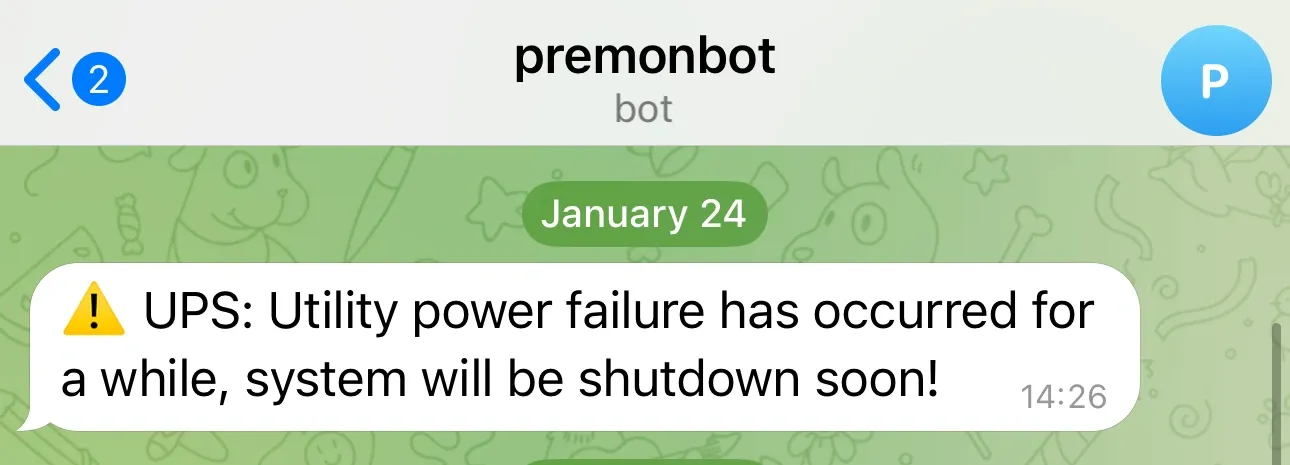

Power Management

The CyberPower UPS covers all mini PCs and network gear. When power fails, it triggers a cascading shutdown — Kubernetes nodes drain properly before Proxmox hosts go down.

| Feature | Implementation | Purpose |

|---|---|---|

| pwrstat | USB to GTi13 Pro | Automated shutdown orchestration |

| SSH Scripts | Custom automation | Graceful cluster shutdown |

| Monitoring | Telegram alerts | Real-time power notifications |

Note

The Dell R720 is in the rack but currently off — it’s running NixOS for learning and exploration, not part of the active production stack. iDRAC7 Enterprise gives remote console access when needed. R720 setup post →

Kubernetes

Fair warning: this is where I went full “because I can” mode. If you just want to run services, Docker is the right answer. But if you want to learn enterprise-grade container orchestration in your homelab, keep reading.

The starting point: onedr0p/cluster-template

Talos OS was the first immutable, declarative OS I’d run. After a few days of troubleshooting, I was sold.

Tip

Why Talos over K3s? Immutable OS means less maintenance, GitOps-first design, declarative everything, and it’s closer to what you’d run in production.

My infrastructure repo: github.com/meroxdotdev/infrastructure

Key customizations:

| Component | Modification | Reason |

|---|---|---|

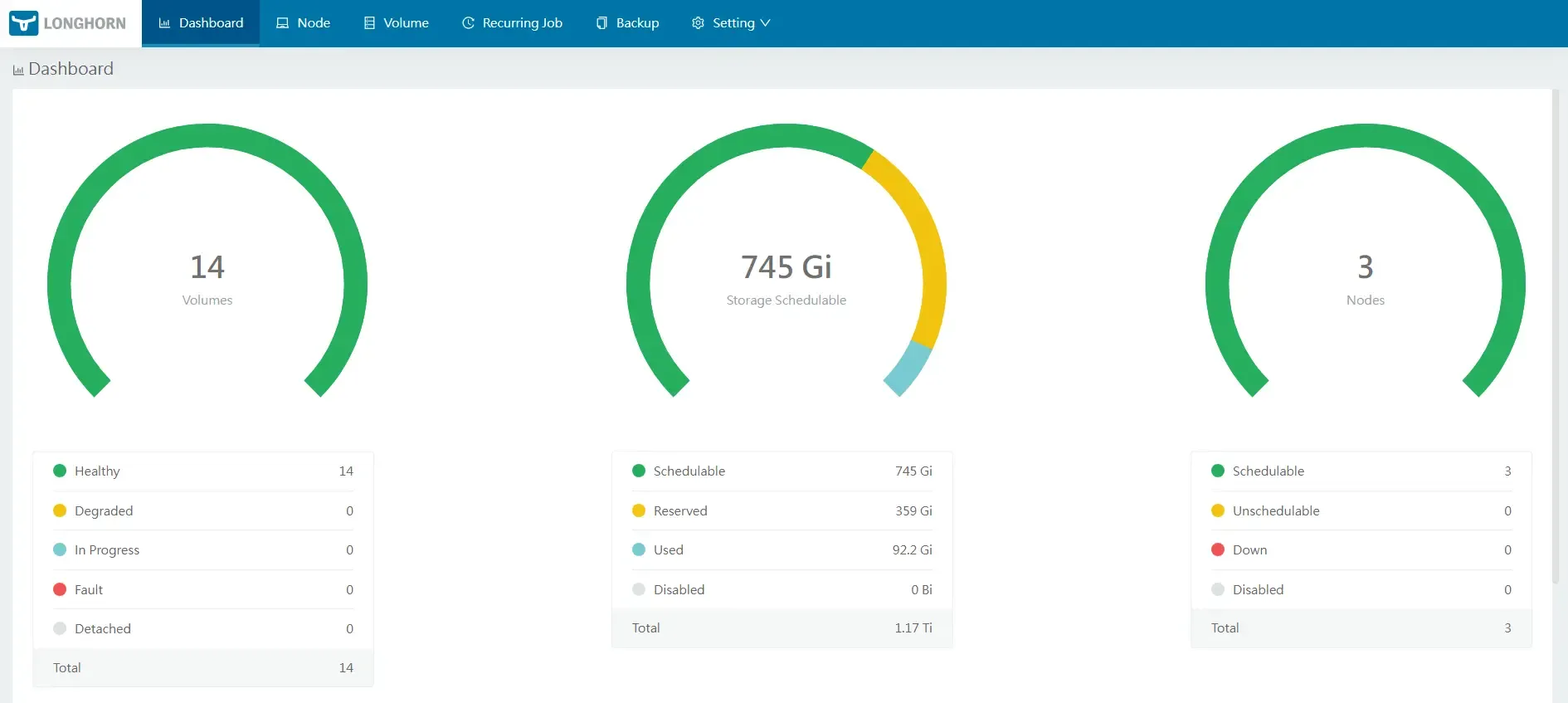

| Storage | Longhorn CSI | Simpler PV/PVC management |

| Talos Patches | Custom machine config | Longhorn requirements |

| Custom Image | factory.talos.dev | Intel iGPU + iSCSI support |

GitOps structure:

kubernetes/apps/├── cert-manager/ # TLS automation├── default/ # Production workloads├── flux-system/ # Flux operator + instance├── kube-system/ # Cilium, CoreDNS, NFS CSI, metrics-server├── network/ # k8s-gateway, Cloudflare tunnel + DNS├── observability/ # Prometheus, Grafana, Loki└── storage/ # Longhorn configuration

Deployed apps:

| App | Purpose | Notes |

|---|---|---|

| Radarr | Movie automation | NFS to Synology |

| Sonarr | TV automation | NFS to Synology |

| Prowlarr | Indexer manager | Central search |

| qBittorrent | Torrent client | Gluetun sidecar + SurfShark WireGuard VPN |

| Jellyseerr | Request management | Public via Cloudflare |

| Jellyfin | Media server | Intel QuickSync enabled |

| Homepage | Dashboard | |

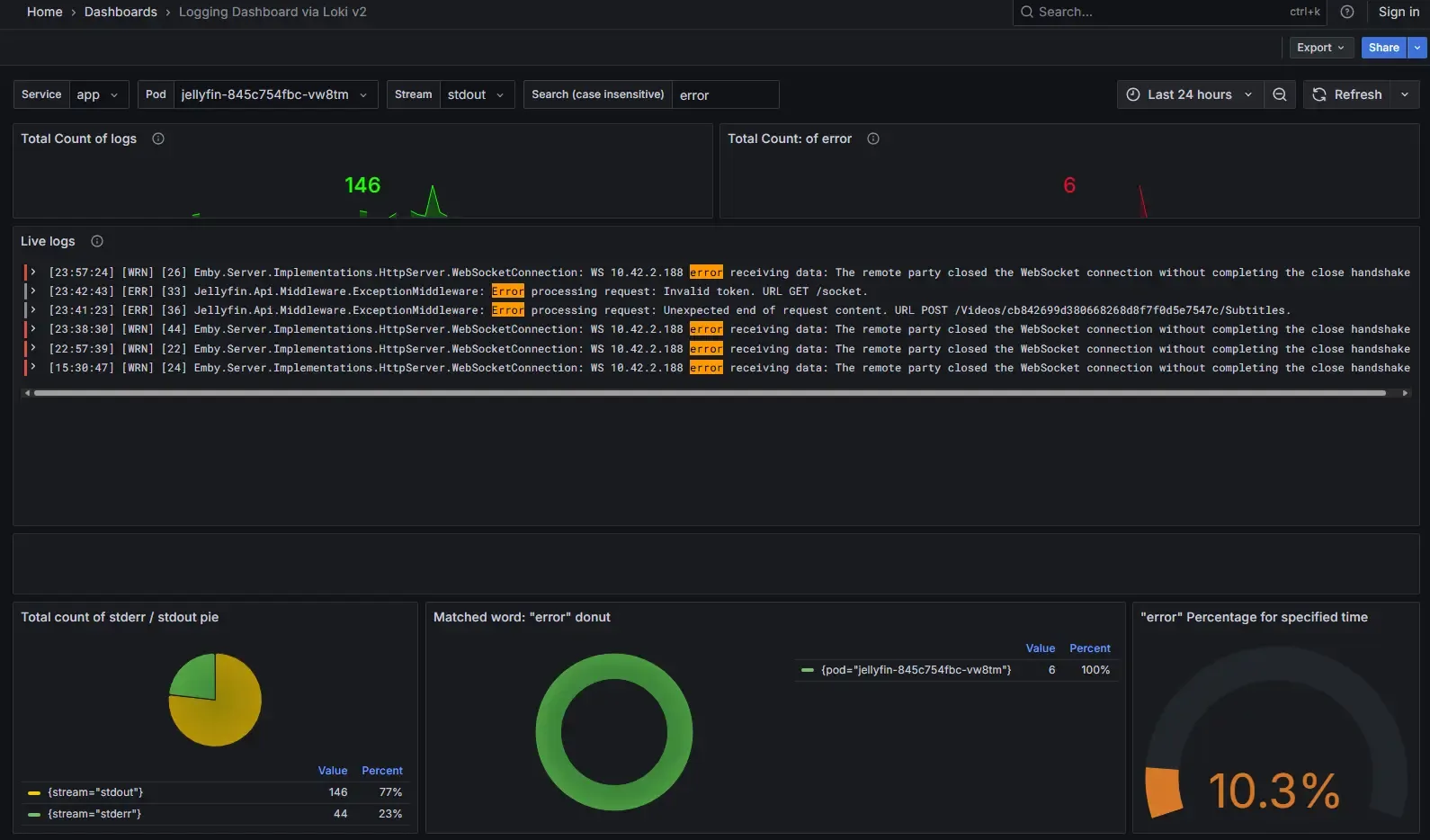

| Grafana | Metrics dashboards | |

| Prometheus + Alertmanager | Metrics collection + alerts | |

| Loki + Promtail | Log aggregation | |

| Netdata | Per-node system monitoring | DaemonSet — one agent per K8s node |

| cert-manager | TLS certificate automation | ACME via Let’s Encrypt |

The live dashboard is public — current service status at inside.merox.dev.

LoadBalancer IPs are handled by Cilium’s L2 announcement — a pool of addresses (10.57.57.100–120) announced directly on the LAN via ARP, no external load balancer needed. Services like qBittorrent, k8s-gateway, and the Cloudflare tunnel endpoint each get a dedicated IP from this pool.

Cluster Rebuild & Disaster Recovery

With declarative config for everything and Flux keeping state in Git, a full cluster rebuild takes ~20 minutes — provision the Talos VMs, bootstrap, and a single command restores all Longhorn volumes from S3 and reconciles every app. The full step-by-step procedure lives in DEPLOY.md.

# Phase 2 K8s restore — one command does everythingtask bootstrap:appstask restore:longhorntask restore:longhorn handles the full sequence automatically: patches the Longhorn BackupTarget CRD, restores all volumes from S3, creates PersistentVolumes with correct claimRefs, rebinds grafana/loki/prometheus PVCs to their restored data, fixes the Longhorn 1.11.2 duplicate HelmRelease issue, and force-reconciles all app HelmReleases. No manual steps.

The repo also includes a full DR automation toolkit:

| Script / Task | Purpose |

|---|---|

scripts/dr-preflight.sh | Pre-flight checks before DR — vault, age.key, Tailscale key, tools |

scripts/dr-verify.sh --phase 1|2|3|all | Post-DR verification for each phase |

scripts/garage-extract-creds.sh | Extract Garage S3 creds after Phase 1, auto-update vault |

scripts/gen-dr-talconfig.sh | Patch talconfig.yaml with DR IPs from Terraform outputs |

talos/terraform/ | Terraform for 3 DR Talos VMs on Proxmox (IDs 810–812) |

task dr:create-vms / dr:destroy-vms | Spin DR VMs up/down |

| Scenario | Where to look |

|---|---|

| Full rebuild (new hardware) | DEPLOY.md — Phase 1 (VPS) → Phase 2 (K8s) → Phase 3 (Agent) |

| Restore Longhorn volumes from S3 | task restore:longhorn — fully automated |

| New hardware (different IPs/disks) | Update talos/talconfig.yaml, cluster-vars.yaml, cilium/networks.yaml |

| Intel iGPU absent on new hardware | Remove gpu.intel.com/i915 from Jellyfin HelmRelease, disable device plugin |

Warning

Back up two things before decommissioning any node: age.key (losing it = losing all SOPS-encrypted secrets) and ~/.openclaw/.env (Anthropic API key + Telegram tokens).

Longhorn PVC backups land in Garage S3 on the Oracle VPS, so persistent data survives even if all three Proxmox nodes go down simultaneously. Restore procedure: Restoring from Longhorn Backups →

Cloud

The Oracle Cloud Free Tier Ampere A1 instance (4 vCPU / 24GB RAM / 200GB disk) is the off-site anchor of the entire setup. It’s not just a place to park Docker containers — it’s the external access layer, the backup target, and the recovery fallback.

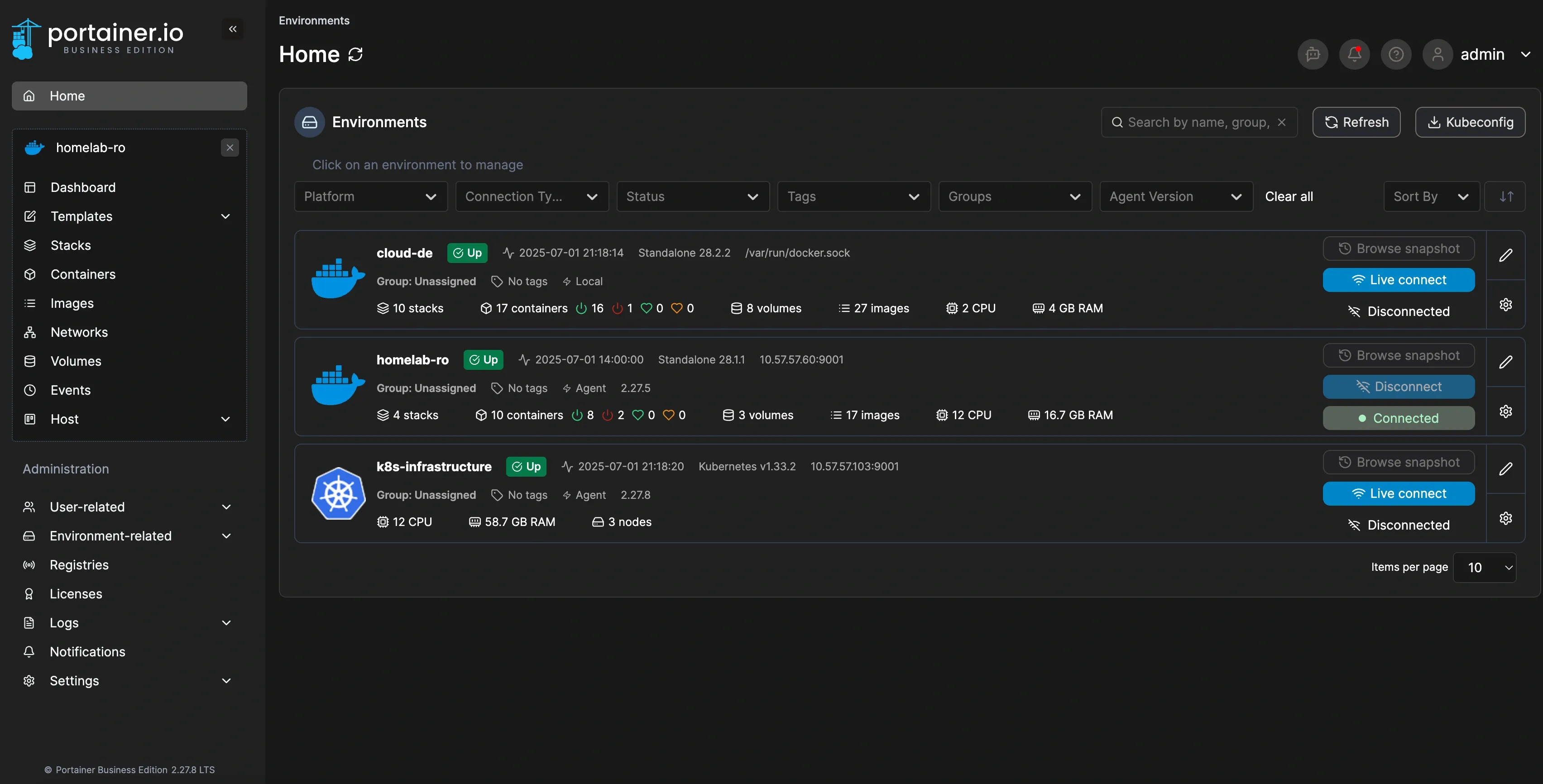

Everything on it is managed through a single Portainer instance at cloud.merox.dev:

Services

| Service | Purpose |

|---|---|

| Traefik | SSL termination for all VPS services |

| Pi-hole | Dedicated Tailscale split-DNS |

| Portainer | Container management |

| Authentik | Identity provider — SSO across all services |

| Guacamole | Remote desktop access via Cloudflare Tunnel |

| Joplin Server | Self-hosted notes sync |

| Uptime Kuma | Service uptime monitoring |

| Glances | System resource monitoring |

| Garage S3 + WebUI | S3-compatible object storage for Longhorn backups |

| Rsync endpoint | Off-site backup target from Synology NAS |

| OpenClaw | AI infrastructure agent (Telegram → kubectl/flux/docker) |

Authentik acts as the identity layer for everything — Google SSO, proxy authentication for Guacamole, OAuth2 for Portainer, and a Kubernetes outpost for cluster services. Full Authentik setup →

OpenClaw is a self-built AI agent that connects Telegram to the infrastructure — kubectl, flux, and docker commands via chat, from anywhere on the Tailscale mesh. Full OpenClaw setup →

Tip

The Oracle instance doubles as a Tailscale exit node — useful for routing traffic through the US when needed.

Disaster Recovery

Oracle can and does terminate Always Free instances without warning. I don’t depend on that not happening.

The full stack is codified in Ansible roles + Terraform under vps/: one make dr-full command provisions an on-demand Hetzner VPS and deploys every service in ~15 minutes. Hetzner is not a standing server — it’s spun up only when Oracle is lost, then torn down after migration back. Cloudflare Tunnel reconnects automatically (same token), Tailscale rejoins the mesh (same auth key), and data volumes restore from the Synology rsync backup.

make dr-preflight # checks vault, age.key, Tailscale key, toolsmake dr-full # terraform apply + ansible deploy (~15 min)./scripts/dr-verify.sh --phase 1 # post-DR verificationmake dr-full └─ terraform apply (~2 min) — new Hetzner server, inventory updated └─ ansible setup (~12 min) — full stack deployed └─ data restore (~30 min) — volumes from Synology rsyncFor the full walkthrough including security hardening, Ansible vault setup, and the Terraform config: Oracle Cloud Free Tier: Building a Full DR Plan →

Backup

Every layer has an off-site copy:

Kubernetes PVCs │ ▼ Longhorn → S3Garage S3 (Oracle Cloud VPS) ◄── Synology NAS rsync

Longhorn PVCs → Garage S3 on Oracle (S3-compatible). Currently ~28GB used out of ~98GB free. Garage replaced MinIO after MinIO discontinued their Docker images and left older builds with known CVEs. MinIO → Garage migration →

Synology NAS → rsync to the same Oracle VPS on a schedule — ~26GB of Docker volumes, configs, and data. Off-site, encrypted. Synology backup setup →

Synology local redundancy — 2× 2TB in RAID1 for the primary NAS data.

For the full backup philosophy, retention policies, and restore procedures: 3-2-1 Backup Strategy →