This is the setup I use to run a full media automation stack on my Kubernetes cluster. It covers Jellyfin for streaming, Radarr and Sonarr for media management, Prowlarr for indexers, qBittorrent for downloads, and Gluetun for VPN tunneling. Config lives on Longhorn; media is stored on a Synology NAS DS223 mounted via NFS 4.1 as a PersistentVolume.

Components

| Application | Role |
|---|---|
| Jellyfin | Media streaming |
| Radarr | Movie library management |
| Sonarr | TV show management |
| Prowlarr | Torrent indexer manager |
| qBittorrent | Download client |
| Gluetun | VPN sidecar for qBittorrent |

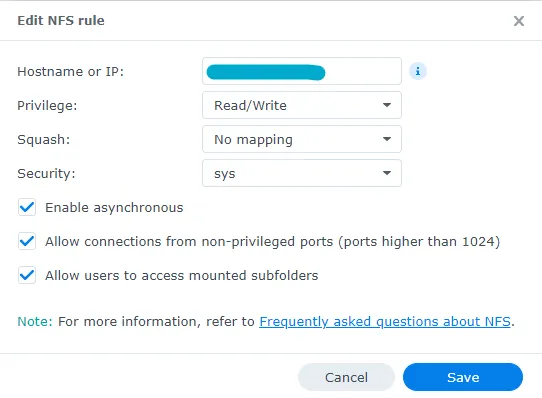

Synology NAS NFS Setup

If you’re using a Synology NAS, this is the NFS share rule I use — applied before mounting on the Kubernetes side.

PersistentVolumes and PVCs

Media Storage

Create nfs-media-pv-and-pvc.yaml:

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: jellyfin-videos
    spec:
      capacity:
        storage: 400Gi
      accessModes:
        - ReadWriteOnce
      nfs:
        path: /volume1/server/k3s/media
        server: storage.merox.cloud
      persistentVolumeReclaimPolicy: Retain
      mountOptions:
        - hard
        - nfsvers=4.1
      storageClassName: ""
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: jellyfin-videos
      namespace: media
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 400Gi
      volumeName: jellyfin-videos
      storageClassName: ""

Apply it:

    kubectl apply -f nfs-media-pv-and-pvc.yaml

Download Storage

Create nfs-download-pv-and-pvc.yaml:

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: qbitt-download
    spec:
      capacity:
        storage: 400Gi
      accessModes:
        - ReadWriteOnce
      nfs:
        path: /volume1/server/k3s/media/download
        server: storage.merox.cloud
      persistentVolumeReclaimPolicy: Retain
      mountOptions:
        - hard
        - nfsvers=4.1
      storageClassName: ""
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: qbitt-download
      namespace: media
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 400Gi
      volumeName: qbitt-download
      storageClassName: ""

Apply it:

    kubectl apply -f nfs-download-pv-and-pvc.yaml

Longhorn PVC for App Configs

Create app-config-pvc.yaml (repeat for each app):

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: app-config # e.g. radarr-config; must match the claimName in the Deployment
      namespace: media
    spec:
      accessModes:
        - ReadWriteOnce
      storageClassName: longhorn
      resources:
        requests:
          storage: 5Gi

Apply it:

    kubectl apply -f app-config-pvc.yaml

Danger

You need a separate PVC for each application: Jellyfin, Sonarr, Radarr, Prowlarr, and qBittorrent.
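The Deployments in this guide reference five config claims by name: `jellyfin-config`, `sonarr-config`, `radarr-config`, `prowlarr-config`, and `qbitt-config`. As a sketch, the template filled in for all five could live in a single multi-document file. The single-file layout is my assumption, as is keeping the 5Gi size from the template for every app.

```yaml
# Config PVCs for every app; names match the claimName fields in the Deployments.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: jellyfin-config
  namespace: media
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: longhorn
  resources:
    requests:
      storage: 5Gi # assumed size, copied from the template
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: sonarr-config
  namespace: media
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: longhorn
  resources:
    requests:
      storage: 5Gi
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: radarr-config
  namespace: media
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: longhorn
  resources:
    requests:
      storage: 5Gi
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: prowlarr-config
  namespace: media
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: longhorn
  resources:
    requests:
      storage: 5Gi
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: qbitt-config
  namespace: media
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: longhorn
  resources:
    requests:
      storage: 5Gi
```

If a claim name here does not match the `claimName` in the corresponding Deployment, the pod stays in Pending until the claim exists.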

Deployments

Jellyfin

Create jellyfin-deployment.yaml:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: jellyfin
      namespace: media
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: jellyfin
      template:
        metadata:
          labels:
            app: jellyfin
        spec:
          containers:
            - name: jellyfin
              image: jellyfin/jellyfin
              volumeMounts:
                - name: config
                  mountPath: /config
                - name: videos
                  mountPath: /data/videos
              ports:
                - containerPort: 8096
          volumes:
            - name: config
              persistentVolumeClaim:
                claimName: jellyfin-config
            - name: videos
              persistentVolumeClaim:
                claimName: jellyfin-videos

Apply it:

    kubectl apply -f jellyfin-deployment.yaml

Sonarr

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: sonarr
      namespace: media
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: sonarr
      template:
        metadata:
          labels:
            app: sonarr
        spec:
          containers:
            - name: sonarr
              image: lscr.io/linuxserver/sonarr
              env:
                - name: PUID
                  value: "1057"
                - name: PGID
                  value: "1056"
              volumeMounts:
                - name: config
                  mountPath: /config
                - name: videos
                  mountPath: /tv
                - name: downloads
                  mountPath: /downloads
              ports:
                - containerPort: 8989
          volumes:
            - name: config
              persistentVolumeClaim:
                claimName: sonarr-config
            - name: videos
              persistentVolumeClaim:
                claimName: jellyfin-videos
            - name: downloads
              persistentVolumeClaim:
                claimName: qbitt-download

Radarr

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: radarr
      namespace: media
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: radarr
      template:
        metadata:
          labels:
            app: radarr
        spec:
          containers:
            - name: radarr
              image: lscr.io/linuxserver/radarr
              env:
                - name: PUID
                  value: "1057"
                - name: PGID
                  value: "1056"
              volumeMounts:
                - name: config
                  mountPath: /config
                - name: videos
                  mountPath: /movies
                - name: downloads
                  mountPath: /downloads
              ports:
                - containerPort: 7878
          volumes:
            - name: config
              persistentVolumeClaim:
                claimName: radarr-config
            - name: videos
              persistentVolumeClaim:
                claimName: jellyfin-videos
            - name: downloads
              persistentVolumeClaim:
                claimName: qbitt-download

Prowlarr

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: prowlarr
      namespace: media
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: prowlarr
      template:
        metadata:
          labels:
            app: prowlarr
        spec:
          containers:
            - name: prowlarr
              image: lscr.io/linuxserver/prowlarr
              env:
                - name: PUID
                  value: "1057"
                - name: PGID
                  value: "1056"
              volumeMounts:
                - name: config
                  mountPath: /config
              ports:
                - containerPort: 9696
          volumes:
            - name: config
              persistentVolumeClaim:
                claimName: prowlarr-config

qBittorrent (standalone)

Warning

qBittorrent v5 renamed the API endpoints /torrents/pause and /torrents/resume to /torrents/stop and /torrents/start. If you use any scripts or integrations that call the qBittorrent API directly, update them before upgrading from v4.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: qbittorrent
      namespace: media
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: qbittorrent
      template:
        metadata:
          labels:
            app: qbittorrent
        spec:
          containers:
            - name: qbittorrent
              image: lscr.io/linuxserver/qbittorrent
              resources:
                limits:
                  memory: "2Gi"
                requests:
                  memory: "512Mi"
              env:
                - name: PUID
                  value: "1057"
                - name: PGID
                  value: "1056"
              volumeMounts:
                - name: config
                  mountPath: /config
                - name: downloads
                  mountPath: /downloads
              ports:
                - containerPort: 8080
          volumes:
            - name: config
              persistentVolumeClaim:
                claimName: qbitt-config
            - name: downloads
              persistentVolumeClaim:
                claimName: qbitt-download

qBittorrent with Gluetun

If you want to route qBittorrent traffic through a VPN, use this version instead. Gluetun runs as a sidecar container in the same pod, so both containers share one network namespace and qBittorrent's traffic leaves through the VPN tunnel.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: qbittorrent
      namespace: media
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: qbittorrent
      template:
        metadata:
          labels:
            app: qbittorrent
        spec:
          containers:
            - name: qbittorrent
              image: lscr.io/linuxserver/qbittorrent
              resources:
                limits:
                  memory: "2Gi"
                requests:
                  memory: "512Mi"
              env:
                - name: PUID
                  value: "1057"
                - name: PGID
                  value: "1056"
              volumeMounts:
                - name: config
                  mountPath: /config
                - name: downloads
                  mountPath: /downloads
              ports:
                - containerPort: 8080
            - name: gluetun
              image: ghcr.io/qdm12/gluetun:v3.40.0
              env:
                - name: VPN_SERVICE_PROVIDER
                  value: "surfshark"
                - name: VPN_TYPE
                  value: "wireguard"
                - name: SERVER_COUNTRIES
                  value: "Netherlands"
                - name: WIREGUARD_ADDRESSES
                  value: "10.14.0.2/16" # Address field from the SurfShark WireGuard config
                - name: FIREWALL_INPUT_PORTS
                  value: "50413,8080" # torrent port + web UI port
                - name: FIREWALL_OUTBOUND_SUBNETS
                  value: "10.0.0.0/8"
                - name: DNS_KEEP_NAMESERVER
                  value: "on"
                - name: DOT
                  value: "off"
                - name: WIREGUARD_PRIVATE_KEY
                  valueFrom:
                    secretKeyRef:
                      name: surfshark-secret
                      key: WIREGUARD_PRIVATE_KEY
              securityContext:
                capabilities:
                  add:
                    - NET_ADMIN
              volumeMounts:
                - name: tun
                  mountPath: /dev/net/tun
          volumes:
            - name: config
              persistentVolumeClaim:
                claimName: qbitt-config
            - name: downloads
              persistentVolumeClaim:
                claimName: qbitt-download
            - name: tun
              hostPath:
                path: /dev/net/tun

Note

I use SurfShark with WireGuard, which is faster than OpenVPN and natively supported by Gluetun. Generate your WireGuard key from the SurfShark dashboard under VPN → Manual Setup → WireGuard. SurfShark does not support port forwarding, so peers cannot initiate inbound connections to your client. Downloads still work, but they may be slower because you can only exchange data with peers that accept inbound connections themselves.
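The Gluetun container reads `WIREGUARD_PRIVATE_KEY` from a Secret named `surfshark-secret`, which has to exist in the `media` namespace before the pod can start. A minimal sketch of that Secret follows; the key value is a placeholder, and using `stringData` (so the key is not base64-encoded by hand) is my choice rather than something the setup above prescribes.

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: surfshark-secret # name referenced by secretKeyRef in the Gluetun container
  namespace: media
type: Opaque
stringData:
  # Placeholder: replace with the private key from your own WireGuard config.
  WIREGUARD_PRIVATE_KEY: "<your-wireguard-private-key>"
```

Keep this file out of version control, or create the Secret through whatever secret management you already use.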

ClusterIP Services

Each app needs a ClusterIP service so Traefik can route to it internally. Create app-service.yaml per application:

    apiVersion: v1
    kind: Service
    metadata:
      name: app # radarr for example
      namespace: media
    spec:
      type: ClusterIP
      ports:
        - port: 80
          targetPort: 7878 # the app's containerPort (7878 is Radarr)
      selector:
        app: app # radarr for example

Apply it:

    kubectl apply -f app-service.yaml

Traefik Middleware

Create default-headers-media.yaml:

    apiVersion: traefik.io/v1alpha1
    kind: Middleware
    metadata:
      name: default-headers-media
      namespace: media
    spec:
      headers:
        browserXssFilter: true
        contentTypeNosniff: true
        forceSTSHeader: true
        stsIncludeSubdomains: true
        stsPreload: true
        stsSeconds: 15552000
        customFrameOptionsValue: SAMEORIGIN
        customRequestHeaders:
          X-Forwarded-Proto: https

Apply it:

    kubectl apply -f default-headers-media.yaml

IngressRoutes

Create app-ingress-route.yaml per application:

    apiVersion: traefik.io/v1alpha1
    kind: IngressRoute
    metadata:
      name: app # radarr for example
      namespace: media
      annotations:
        kubernetes.io/ingress.class: traefik-external
    spec:
      entryPoints:
        - websecure
      routes:
        - match: Host(`movies.merox.cloud`) # change to your domain
          kind: Rule
          services:
            - name: app # radarr for example
              port: 80
          middlewares:
            - name: default-headers-media
      tls:
        secretName: mycert-tls # change to your cert name

Apply it:

    kubectl apply -f app-ingress-route.yaml

Danger

Add the hostname declared in your IngressRoute to your DNS server before applying.
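As a concrete instance of the per-application Service and IngressRoute templates, here is a sketch of the pair for Jellyfin. The `targetPort` is the `containerPort` from the Jellyfin Deployment, and the middleware and TLS secret names come from the templates. The hostname `jellyfin.example.com` is a hypothetical placeholder.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: jellyfin
  namespace: media
spec:
  type: ClusterIP
  ports:
    - port: 80
      targetPort: 8096 # containerPort from the Jellyfin Deployment
  selector:
    app: jellyfin # must match the pod label set by the Deployment
---
apiVersion: traefik.io/v1alpha1
kind: IngressRoute
metadata:
  name: jellyfin
  namespace: media
  annotations:
    kubernetes.io/ingress.class: traefik-external
spec:
  entryPoints:
    - websecure
  routes:
    - match: Host(`jellyfin.example.com`) # hypothetical hostname, change to your domain
      kind: Rule
      services:
        - name: jellyfin # the Service defined above
          port: 80
      middlewares:
        - name: default-headers-media
  tls:
    secretName: mycert-tls # change to your cert name
```

For the other apps, swap the names and use the `containerPort` from each Deployment as `targetPort`: 8989 for Sonarr, 7878 for Radarr, 9696 for Prowlarr, and 8080 for qBittorrent.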

Manifest Files

All manifests are available here — copy and deploy what you need: